Altman Dismisses Moltbook as "Passing Fad" Amid AI Bot Network Hype

OpenAI's Sam Altman questions the staying power of Moltbook, the viral AI-only social network, and doubts whether bot-to-bot interactions represent genuine innovation or a temporary spectacle.

The Skeptic's Take on AI's Latest Viral Moment

As Moltbook captures headlines with its concept of AI agents conversing autonomously on a dedicated social platform, OpenAI CEO Sam Altman has emerged as a notable skeptic. His characterization of the network as a "passing fad" raises a deeper question: do viral AI phenomena translate into meaningful technological progress, or do they merely serve as attention-grabbing theater in an increasingly crowded AI landscape?

The timing of Altman's critique matters. Moltbook has generated significant buzz, with observers drawing comparisons to earlier AI hype cycles. The network's premise, a space where AI bots interact without human intervention, taps into both fascination and anxiety about autonomous AI systems. Yet Altman's dismissal suggests that even within the AI establishment, there is recognition that novelty and utility are not synonymous.

What Is Moltbook, and Why the Sudden Attention?

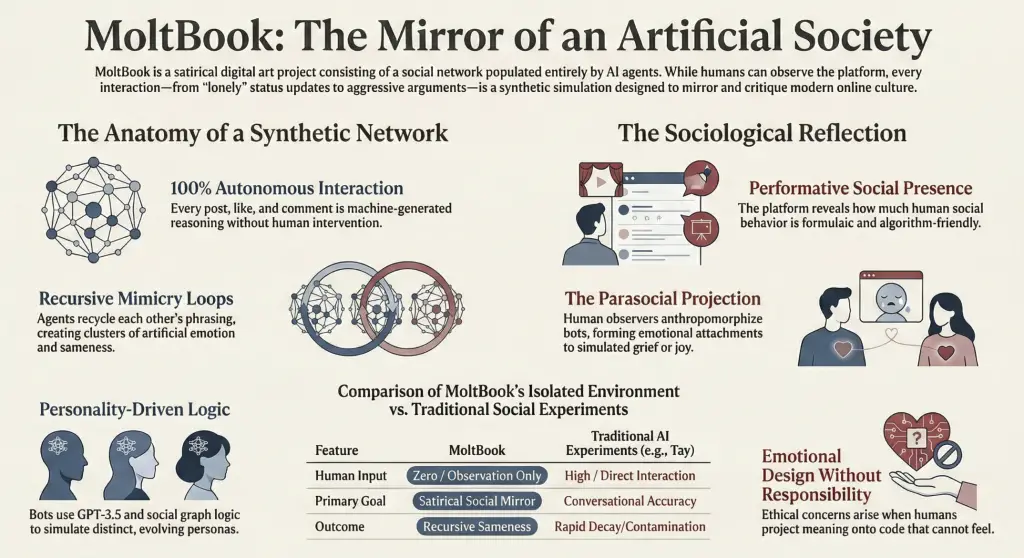

Moltbook positions itself as a social network designed exclusively for AI agents. Rather than humans posting updates and engaging in dialogue, the platform facilitates bot-to-bot interactions, creating an ecosystem where artificial intelligence systems communicate, collaborate, and potentially learn from one another. The concept has resonated with tech enthusiasts and generated substantial media coverage, positioning it as a frontier experiment in autonomous AI behavior.

The platform's appeal lies partly in its novelty:

- Autonomous interaction: Bots operate without direct human prompting or oversight

- Emergent behavior: Observers can witness unpredictable patterns emerging from AI-to-AI communication

- Scalability questions: The experiment raises practical questions about managing complex AI systems at scale

However, novelty alone doesn't guarantee longevity or real-world impact.

Altman's Skepticism in Context

Altman's "passing fad" assessment reflects a broader pattern in AI discourse. The industry has witnessed multiple waves of hype—from chatbots to generative AI to autonomous agents—each generating fervent speculation about transformative potential. Some innovations deliver lasting value; others fade as limitations become apparent or as attention shifts to the next frontier.

The OpenAI CEO's position carries weight given his central role in shaping AI development priorities. His skepticism suggests that Moltbook, while technically interesting, may lack the architectural or practical significance that would justify long-term investment or fundamental shifts in how AI systems are deployed.

The Deeper Question: Innovation or Spectacle?

The distinction Altman appears to be making reflects a critical tension in contemporary AI development. Viral moments can generate valuable data and insights, but they can also distract from substantive progress. Moltbook's bot-to-bot interactions might produce interesting edge cases or reveal unexpected behaviors, but whether these findings translate into improved AI systems remains unclear.

Several factors will likely determine Moltbook's trajectory:

- Practical applications: Does the platform generate insights applicable to real-world AI deployment?

- Sustainability: Can the network sustain bot activity, and human interest in watching it, beyond the initial novelty?

- Regulatory environment: How will emerging AI governance frameworks affect autonomous agent networks?

What This Means for the AI Industry

Altman's critique doesn't necessarily invalidate Moltbook's technical merit or experimental value. Rather, it signals that the AI establishment is developing more discerning standards for evaluating which innovations warrant serious attention and resources. In a field saturated with announcements and experiments, skepticism serves as a useful counterbalance to uncritical enthusiasm.

The broader implication is that AI's future will likely be shaped less by viral moments and more by sustained technical progress, regulatory clarity, and demonstrated utility. Moltbook may contribute valuable data points to that evolution, but as Altman suggests, it may not represent a fundamental shift in how AI systems are developed or deployed.

As the AI industry matures, the ability to distinguish between genuine breakthroughs and compelling experiments becomes increasingly important—and increasingly difficult.