Anthropic Launches Security Center for Claude Code Amid AI Coding Arms Race

Anthropic is rolling out a dedicated Security Center for Claude Code, introducing sandboxing and autonomous execution safeguards as the race for secure AI-powered development tools intensifies.

The Security Imperative in AI-Powered Development

The competition for dominance in AI-assisted coding is heating up, and security has become the battleground. As developers increasingly rely on AI agents to write and execute code autonomously, the risk surface expands dramatically. Anthropic is addressing this challenge head-on with a new Security Center for Claude Code, introducing layered safeguards designed to contain and monitor autonomous code execution.

This move signals a shift in how AI development tools approach risk management. Rather than treating security as an afterthought, Anthropic is embedding it into the core architecture of its coding assistant, a necessary step as Claude Code gains features like checkpoints and subagents.

What the Security Center Delivers

The Security Center introduces several key capabilities:

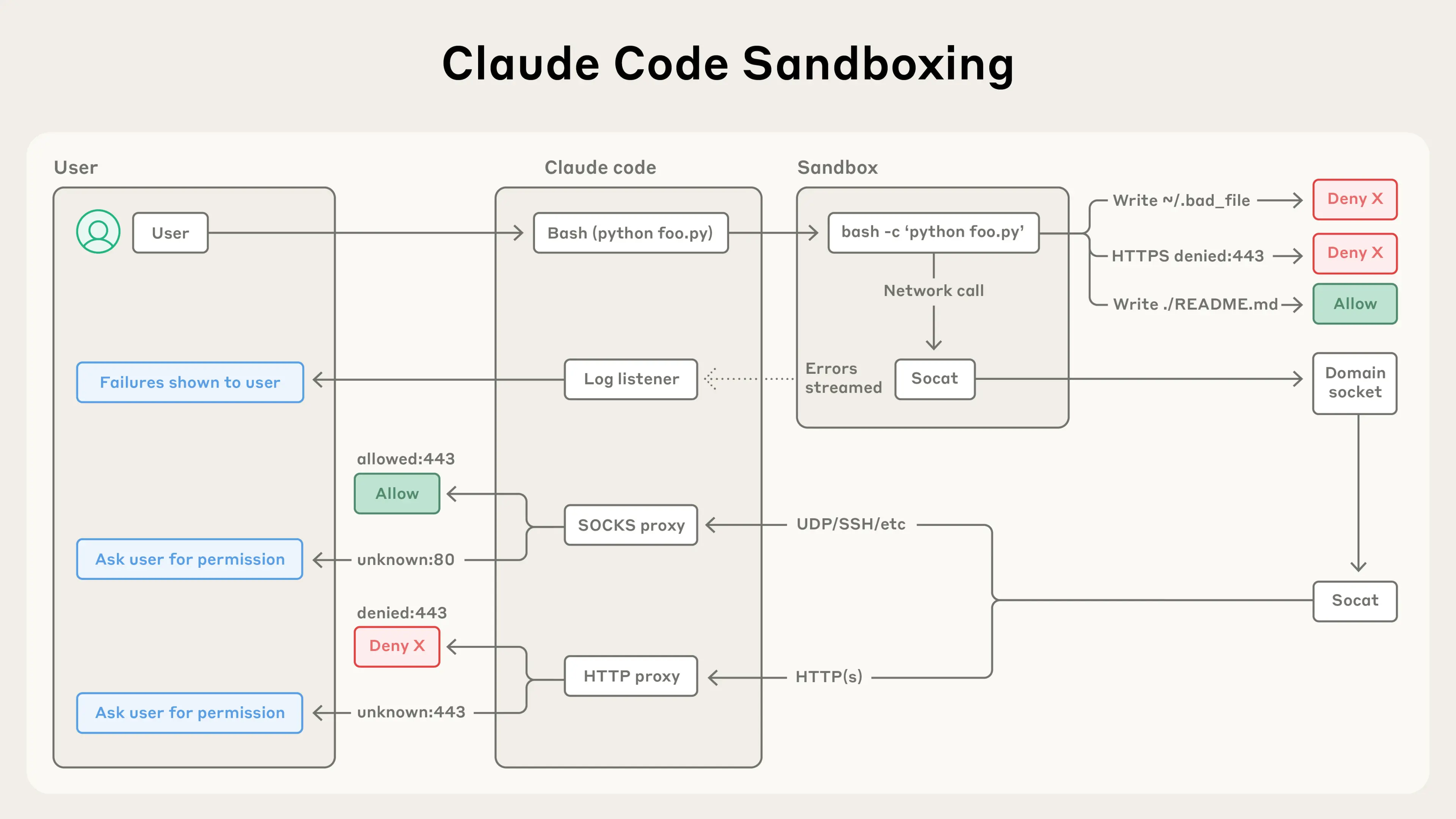

- Sandboxing Infrastructure: Isolates code execution environments to prevent malicious or errant code from affecting host systems

- Autonomous Execution Monitoring: Real-time oversight of agent-driven code operations with granular control and audit trails

- Constitutional AI Integration: Behavioral guardrails, built on Anthropic's constitutional AI framework, that align code generation with security best practices

- Transparency and Control: Developers maintain visibility into what Claude Code is executing and can intervene at critical junctures
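To make the sandboxing and audit-trail ideas above concrete, here is a minimal Python sketch. It is an illustration under stated assumptions, not a description of how Claude Code or the Security Center works: the `run_isolated` helper and the fields of its audit record are hypothetical. It runs a snippet in a separate process with a throwaway working directory and a time limit, then returns a record of what happened. This is process-level containment only. A real sandbox would also restrict filesystem, network, and system-call access at the operating-system level.

```python
import json
import subprocess
import sys
import tempfile
import time


def run_isolated(code: str, timeout_s: float = 5.0) -> dict:
    """Run `code` in a child Python process and return an audit record.

    Hypothetical helper for illustration; not part of Claude Code.
    """
    started = time.time()
    # The child gets a throwaway working directory that is deleted afterwards.
    with tempfile.TemporaryDirectory() as workdir:
        try:
            proc = subprocess.run(
                # -I starts Python in isolated mode: PYTHON* environment
                # variables and the user site directory are ignored.
                [sys.executable, "-I", "-c", code],
                cwd=workdir,
                capture_output=True,
                text=True,
                timeout=timeout_s,  # the child is killed if it runs too long
            )
            exit_code, timed_out = proc.returncode, False
            stdout, stderr = proc.stdout, proc.stderr
        except subprocess.TimeoutExpired:
            exit_code, timed_out = None, True
            stdout, stderr = "", ""
    # The audit record captures what was run and how it ended.
    return {
        "started_at": started,
        "duration_s": round(time.time() - started, 3),
        "code": code,
        "exit_code": exit_code,
        "timed_out": timed_out,
        "stdout": stdout,
        "stderr": stderr,
    }


if __name__ == "__main__":
    record = run_isolated("print('hello from the child process')")
    print(json.dumps(record, indent=2))
```

Returning a plain dictionary keeps the record easy to serialize, so each execution could be appended to a log file as one JSON line and reviewed later.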

According to Anthropic's recent agentic coding trends report, organizations are increasingly concerned about the risks of autonomous agents—particularly around unintended side effects and privilege escalation. The Security Center directly addresses these pain points.

The Broader Context

This initiative arrives as the AI coding landscape becomes increasingly competitive. Claude Code itself has undergone significant evolution, expanding from a simple code-writing assistant to a more autonomous agent capable of managing complex development workflows. The addition of security-first features reflects a maturation of the product category.

Anthropic Labs, the company's innovation division, has been exploring advanced use cases for Claude Code, including multi-step agentic workflows. The Security Center is the natural complement to these capabilities—enabling developers to confidently deploy agents without sacrificing control or visibility.

Industry observers like Simon Willison have noted the emergence of collaborative coding paradigms where humans and AI agents work in tandem. Security infrastructure becomes essential in these hybrid workflows, where the AI agent might have access to sensitive repositories, databases, or infrastructure.

Why This Matters Now

The timing is significant. As organizations move from experimenting with AI coding tools to deploying them in production environments, security requirements shift from "nice to have" to mission-critical. A single compromised code-generation step could introduce vulnerabilities across an entire codebase or the infrastructure it runs on.

The Security Center positions Claude Code as a tool suitable for enterprise deployments where governance, auditability, and risk management are non-negotiable. This is particularly important as agentic coding becomes a mainstream practice rather than an experimental edge case.

What's Next

The Security Center represents one piece of Anthropic's broader strategy to make Claude Code the default choice for developers who need both capability and control. As the AI coding space matures, expect security features to become table stakes—and Anthropic is ensuring it leads rather than follows on this front.