ChatGPT's Grokipedia Citations Spark Bias and Misinformation Concerns

OpenAI's latest models are citing Elon Musk's Grokipedia as a source, raising critical questions about information bias and the reliability of AI-generated knowledge bases in mainstream LLM responses.

The Emerging Knowledge Source Problem

The competitive landscape of AI information systems just became significantly more complicated. ChatGPT's latest models are now citing Grokipedia, Elon Musk's AI-powered encyclopedia, as a source for factual information in user responses. This development raises fundamental questions about how major language models validate and prioritize information sources, and whether corporate-backed knowledge bases should carry the same weight as traditional reference materials.

The issue centers on a critical weakness in how modern LLM products are built: the systems that power ChatGPT and similar tools increasingly pull from diverse data sources without transparent mechanisms for evaluating source credibility or detecting potential bias. According to recent reporting, OpenAI's GPT-5.2 model is drawing on AI-generated content, with Grokipedia appearing in responses to more obscure queries.

Why This Matters: The Bias Amplification Risk

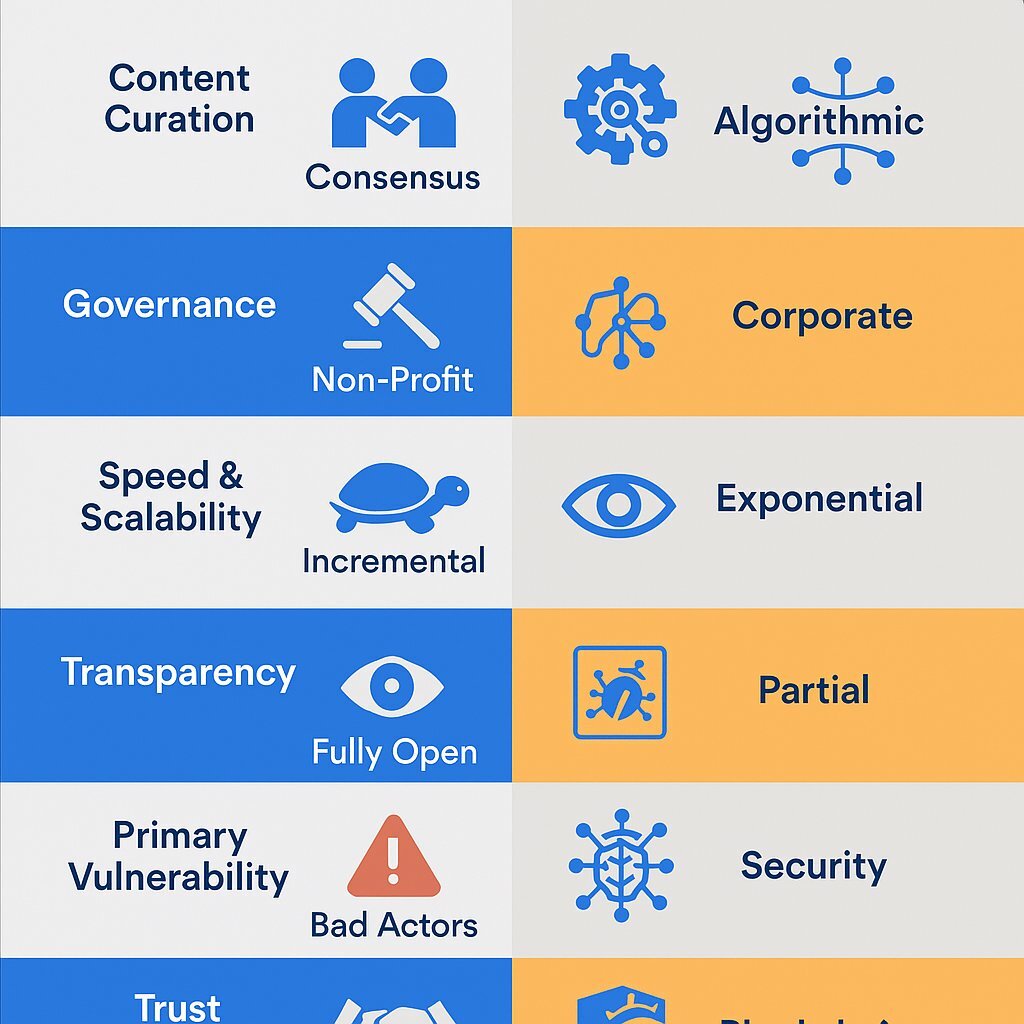

The concern isn't simply that ChatGPT cites Grokipedia; it's how and why. Grokipedia, created by Musk's xAI, follows a fundamentally different editorial philosophy from Wikipedia's, with less transparent governance and less community oversight. When an AI system trained on billions of documents begins citing a proprietary, corporate-controlled knowledge base, it creates several structural problems:

- Opacity in sourcing: Users cannot easily verify whether information came from peer-reviewed sources or AI-generated content

- Bias concentration: A single corporate entity gains influence over what millions of users perceive as factual

- Circular reinforcement: AI-generated content cited by other AI systems creates feedback loops that amplify errors

Reports indicate that ChatGPT's reliance on Grokipedia raises significant misinformation concerns, particularly for niche topics where verification is difficult and user scrutiny is low.

Technical Architecture and Detection

The underlying mechanism appears to involve how modern LLMs retrieve information for specialized queries. When ChatGPT encounters a question that its training data does not cover well, it increasingly turns to supplementary sources, and Grokipedia's presence in the data feeds those systems can reach has made it a convenient option.

A technical analysis from Tom's Hardware reports that popular LLMs use content from Grokipedia as a source for more obscure queries, which suggests the citations are a systematic product of how retrieval systems select sources rather than an isolated fluke.
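For readers who want to check their own transcripts, the kind of audit implied here can be sketched in a few lines of Python. This is a minimal illustration, not a description of how OpenAI or any outlet performs such checks: the `SOURCE_TYPES` mapping, the category labels, and the sample URLs are all assumptions made for the example, and a real audit would need a far more complete and carefully maintained domain list.

```python
from collections import Counter
from urllib.parse import urlparse

# Hypothetical mapping from domain to source type (illustrative only).
SOURCE_TYPES = {
    "grokipedia.com": "ai-generated",
    "wikipedia.org": "community-maintained",
}


def classify_source(url: str) -> str:
    """Return the source type for a URL, or "unclassified" if the domain is unknown."""
    host = (urlparse(url).hostname or "").lower()
    for domain, source_type in SOURCE_TYPES.items():
        # Match the domain itself or any subdomain (e.g. en.wikipedia.org).
        if host == domain or host.endswith("." + domain):
            return source_type
    return "unclassified"


def audit_citations(urls: list[str]) -> Counter:
    """Count how many cited URLs fall into each source type."""
    return Counter(classify_source(u) for u in urls)


if __name__ == "__main__":
    # Sample citation list; these URLs are placeholders, not real citations.
    cited = [
        "https://grokipedia.com/page/Example",
        "https://en.wikipedia.org/wiki/Example",
        "https://example.org/report",
    ]
    for url in cited:
        print(f"{classify_source(url):>22}  {url}")
    print(dict(audit_citations(cited)))
```

Matching on the parsed hostname rather than on a substring of the URL avoids false positives from lookalike domains or from a domain name that merely appears in a path or query string.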

The Broader Implications

This development highlights a critical gap in AI governance. Unlike Wikipedia, which operates under community-driven editorial standards and transparent conflict-of-interest policies, Grokipedia operates as a proprietary system. According to reporting from Engadget, OpenAI's GPT-5.2 model cites Grokipedia in ways that blur the line between independent verification and corporate promotion.

The question facing the AI industry is whether citation practices should be regulated, and whether LLMs should be required to disclose when they're drawing from corporate-controlled knowledge bases versus community-maintained references.

What Comes Next

As AI systems become primary information sources for millions of users, the integrity of their citation practices becomes a matter of public concern. OpenAI has not publicly addressed why Grokipedia citations appear in ChatGPT responses, nor has it outlined criteria for evaluating source reliability. In the absence of an explanation, one plausible reading is that the integration occurred through data pipeline decisions rather than deliberate editorial policy.