Meta's TRIBE v2 AI Model Predicts How the Brain Responds to Images, Sounds, and Speech

Meta's new TRIBE v2 foundation model can predict how the human brain responds to images, sounds, and speech—opening new frontiers in neuroscience research and AI-brain interfaces.

The Brain-AI Convergence Just Got Real

The race to decode human cognition has entered a new phase. Meta's latest AI model, TRIBE v2, can now predict brain activity across multiple sensory modalities—a breakthrough that challenges the traditional boundaries between neuroscience and machine learning. While competitors explore narrow applications, Meta is building a unified foundation model that treats the brain itself as a predictable system.

This isn't theoretical neuroscience. The implications ripple across clinical diagnostics, brain-computer interfaces, and our fundamental understanding of perception itself.

What TRIBE v2 Actually Does

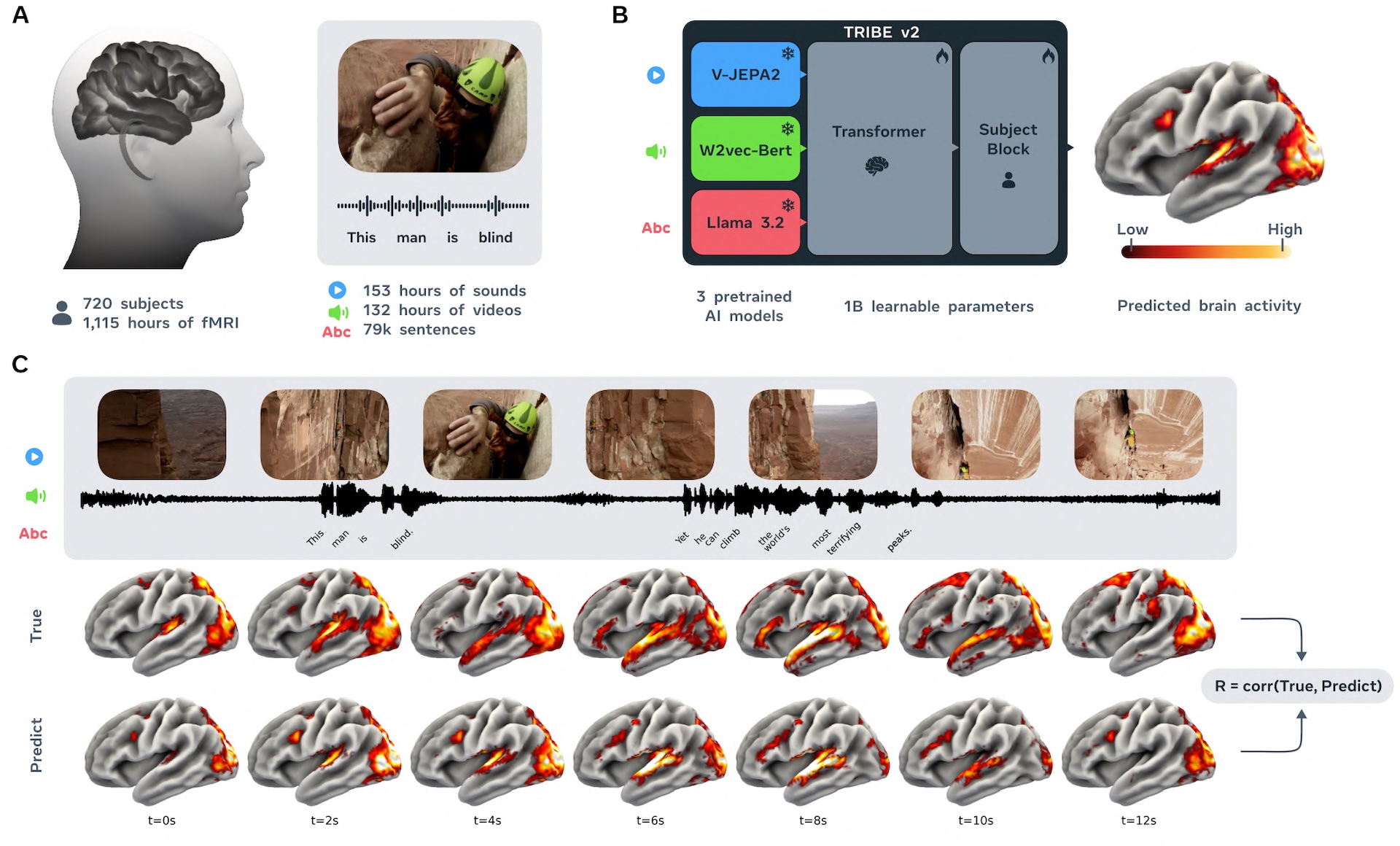

TRIBE v2 is a foundation model designed to predict neural responses to visual, auditory, and linguistic stimuli. Rather than focusing on a single brain region or sensory pathway, the model integrates multimodal inputs to forecast how distributed neural networks activate in response to real-world stimuli.

Three design choices set the model apart from previous approaches:

- Multimodal integration: Processes images, sounds, and text simultaneously to predict coordinated brain responses

- Foundation model approach: Trained on large-scale neural datasets, enabling transfer learning across different brain regions and individuals

- Scalability: Designed to work across diverse populations, potentially reducing the need for extensive individual calibration

According to Meta's research publications, the model predicts neural responses more accurately than previous single-modality approaches. That result suggests that treating the brain as an integrated system, rather than as a set of isolated functional modules, yields better predictions.
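To make the idea of "predicting neural responses" concrete, here is a minimal sketch of a linear encoding model, a standard technique in computational neuroscience. It is not Meta's architecture. The data is synthetic, and the feature sizes, the `fit_ridge` and `voxelwise_correlation` helpers, and the "video", "audio", and "text" feature blocks are all assumptions made for illustration. The sketch fits a ridge regression from stimulus features to simulated voxel responses, then scores predictions on held-out samples by per-voxel correlation.

```python
import numpy as np


def fit_ridge(X, Y, alpha=1.0):
    """Closed-form ridge regression: W = (X^T X + alpha * I)^-1 X^T Y."""
    n_features = X.shape[1]
    return np.linalg.solve(X.T @ X + alpha * np.eye(n_features), X.T @ Y)


def voxelwise_correlation(Y_true, Y_pred):
    """Pearson correlation between measured and predicted responses, per voxel."""
    yt = Y_true - Y_true.mean(axis=0)
    yp = Y_pred - Y_pred.mean(axis=0)
    denom = np.linalg.norm(yt, axis=0) * np.linalg.norm(yp, axis=0)
    return (yt * yp).sum(axis=0) / denom


def make_synthetic_data(n_samples=400, n_voxels=50, seed=0):
    """Build hypothetical stimulus features and simulated responses."""
    rng = np.random.default_rng(seed)
    # Stand-ins for per-modality stimulus embeddings (sizes are arbitrary).
    video = rng.standard_normal((n_samples, 8))
    audio = rng.standard_normal((n_samples, 6))
    text = rng.standard_normal((n_samples, 4))
    X_all = np.hstack([video, audio, text])
    # Simulated responses depend on all three modalities, plus noise.
    W_true = rng.standard_normal((X_all.shape[1], n_voxels))
    Y = X_all @ W_true + rng.standard_normal((n_samples, n_voxels))
    return video, X_all, Y


def evaluate(X, Y, n_train=300, alpha=1.0):
    """Fit on the first n_train samples, score on the remaining ones."""
    W = fit_ridge(X[:n_train], Y[:n_train], alpha)
    return voxelwise_correlation(Y[n_train:], X[n_train:] @ W).mean()


video, X_all, Y = make_synthetic_data()
score_video_only = evaluate(video, Y)
score_multimodal = evaluate(X_all, Y)
print(f"video only: {score_video_only:.2f}, multimodal: {score_multimodal:.2f}")
```

The multimodal model scores higher here by construction, because the simulated responses were generated from all three feature blocks. The sketch only shows how such a comparison is set up; it says nothing about how large the gap is on real brain recordings.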

Why This Matters Beyond the Lab

The practical applications extend far beyond academic neuroscience. TRIBE v2 could accelerate clinical research by providing a "digital twin" of brain activity, allowing researchers to test hypotheses computationally before conducting expensive and time-consuming human studies.

Key implications include:

- Faster drug development: If the approach can be extended from sensory stimuli to drug effects, pharmaceutical companies could simulate neural responses to candidate compounds and reduce their reliance on animal testing

- Brain-computer interfaces: More accurate decoding of intent from neural signals, improving prosthetic control and communication aids

- Personalized medicine: Understanding individual variation in neural responses to treatments

According to neuroscience researchers cited in coverage of the announcement, the foundation model approach represents a paradigm shift: a move away from hand-crafted features and toward learned representations that may better capture how the brain actually computes.

The Competitive Landscape

Meta's move signals intensifying competition in the neurotechnology space. While academic labs have published brain-decoding models for years, Meta's integration of multimodal prediction into a scalable foundation model positions the company as a serious player in translating neuroscience research into deployable systems.

The company's approach differs from narrow-use-case models: rather than optimizing for a single prediction task, TRIBE v2 is built as a general-purpose tool for "in-silico neuroscience"—computational experiments that replace or augment physical research.
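As a rough illustration of what an in-silico experiment could look like, the sketch below runs a simulated contrast between two stimulus conditions. Everything in it is hypothetical: `W_model` is a random linear map standing in for a trained brain-response model, `predict_responses` is not a TRIBE v2 API, and the feature vectors are synthetic. The point is only the workflow: feed two sets of stimuli to the model, compare the predicted responses, and shortlist the voxels that differ most before running a real study.

```python
import numpy as np

rng = np.random.default_rng(1)
N_FEATURES, N_VOXELS = 16, 100

# Hypothetical stand-in for a trained model: a fixed linear map from
# stimulus features to predicted voxel responses.
W_model = rng.standard_normal((N_FEATURES, N_VOXELS))


def predict_responses(features):
    """Return predicted responses, shape (n_stimuli, n_voxels)."""
    return features @ W_model


def in_silico_contrast(features_a, features_b):
    """Mean predicted response to condition A minus condition B, per voxel."""
    mean_a = predict_responses(features_a).mean(axis=0)
    mean_b = predict_responses(features_b).mean(axis=0)
    return mean_a - mean_b


# Two synthetic conditions that differ only along feature 0.
cond_a = rng.standard_normal((200, N_FEATURES))
cond_a[:, 0] += 2.0
cond_b = rng.standard_normal((200, N_FEATURES))

contrast = in_silico_contrast(cond_a, cond_b)

# Voxels with the largest predicted difference are candidates for follow-up.
top_voxels = np.argsort(-np.abs(contrast))[:5]
print("candidate voxels:", top_voxels)
```

A simulated contrast like this can only narrow down hypotheses. Its conclusions are only as good as the model's predictions, so any finding would still need confirmation with measured brain data.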

What's Still Unknown

The current release raises important questions:

- Generalization limits: How well does the model transfer to individuals with neurological conditions or atypical neural organization?

- Ethical guardrails: What safeguards exist to prevent misuse in surveillance or manipulation contexts?

- Data transparency: Which datasets trained the model, and what biases might they introduce?

Meta has not yet published comprehensive technical documentation addressing these concerns, though the company's research team has indicated that peer review and open publication are forthcoming.

The Broader Implication

TRIBE v2 marks a turning point: AI systems are moving from predicting human behavior to predicting human cognition. The distinction matters. If machines can reliably forecast neural responses, the next step (decoding intention, preference, or even belief) starts to look technically feasible, raising profound questions about privacy, autonomy, and the nature of human agency.

For now, Meta is positioning TRIBE v2 as a research tool. Whether it remains confined to the lab, or becomes infrastructure for consumer applications, will define the next chapter of AI's relationship with neuroscience.