Microsoft Unveils Rho-alpha for Advanced Robotics

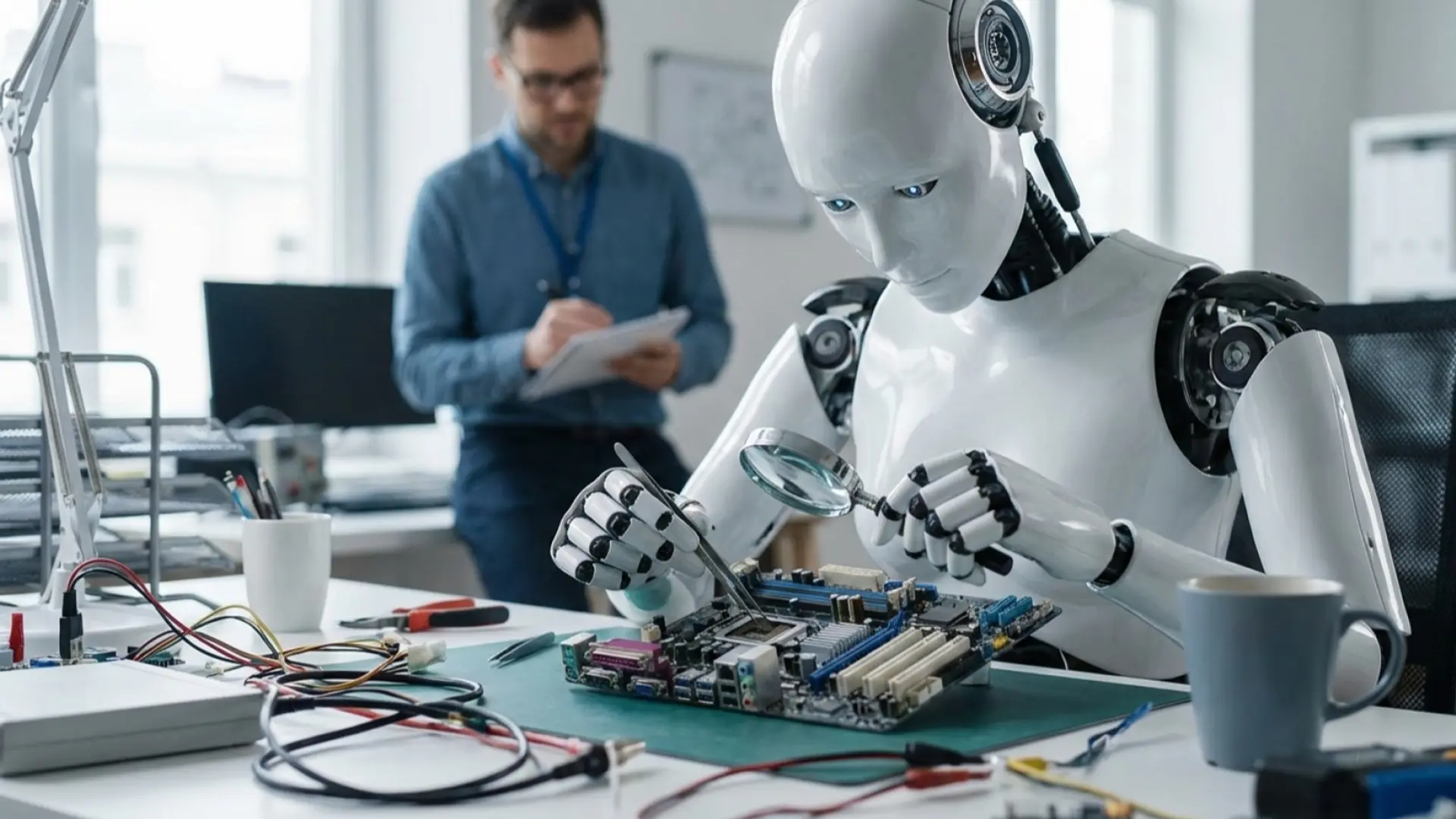

Microsoft unveils Rho-alpha, a robotics model integrating vision, language, and tactile sensing for complex tasks, marking a new era in robotics AI.

Microsoft Introduces Rho-alpha: A New Era for Robotics Foundation Models

Microsoft has unveiled Rho-alpha, its first robotics model derived from the Phi series of vision-language models, marking a significant step toward enabling robots to understand and execute natural-language instructions in complex, real-world environments. The model represents a departure from traditional robotics systems by combining vision, language, and tactile sensing to perform bimanual manipulation tasks—work requiring two-armed coordination and precise motor control.

What Is Rho-alpha and How Does It Work?

Rho-alpha functions as what Microsoft describes as a VLA+ (Vision-Language-Action Plus) model, extending beyond conventional vision-language-action approaches by incorporating tactile sensing and force feedback capabilities. The system translates natural-language commands into low-level control signals that drive robotic hardware, enabling dual-arm robots to perform manipulation tasks such as button pushing, knob turning, plug insertion, and tool handling.
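To make the idea of turning a command plus sensor input into low-level control signals concrete, here is a minimal sketch of such a control loop. It is not Microsoft's interface: `Observation`, `BimanualAction`, `StubPolicy`, and `run_episode` are all hypothetical names, and the "policy" is a toy rule standing in for a learned model.

```python
from dataclasses import dataclass
from typing import List, Protocol, Sequence


@dataclass
class Observation:
    """One timestep of multi-modal input (all fields are illustrative)."""
    image: Sequence[float]    # flattened camera features
    tactile: Sequence[float]  # fingertip pressure readings in [0, 1]
    instruction: str          # natural-language command


@dataclass
class BimanualAction:
    """Low-level joint targets, one list per arm."""
    left_arm: List[float]
    right_arm: List[float]


class Policy(Protocol):
    def act(self, obs: Observation) -> BimanualAction: ...


class StubPolicy:
    """Placeholder for a learned model; this is NOT Rho-alpha."""

    def __init__(self, joints_per_arm: int = 7) -> None:
        self.joints_per_arm = joints_per_arm

    def act(self, obs: Observation) -> BimanualAction:
        # Toy rule: shrink the commanded step as contact pressure rises,
        # to show tactile input influencing the action.
        contact = max(obs.tactile, default=0.0)
        step = 0.1 * (1.0 - min(contact, 1.0))
        return BimanualAction([step] * self.joints_per_arm,
                              [step] * self.joints_per_arm)


def run_episode(policy: Policy,
                observations: Sequence[Observation]) -> List[BimanualAction]:
    """Query the policy once per control tick and collect the actions."""
    return [policy.act(obs) for obs in observations]


obs_free = Observation([0.0], [0.0], "push the button")
obs_contact = Observation([0.0], [1.0], "push the button")
actions = run_episode(StubPolicy(), [obs_free, obs_contact])
```

In this sketch the second action is smaller than the first because the tactile reading reports full contact, which is the kind of behavior a camera-only input could not trigger.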

The architecture marks a shift away from vision-only designs. Unlike systems that rely exclusively on camera input, Rho-alpha processes multiple sensory modalities simultaneously. This multi-modal approach is critical for tasks involving physical contact, where visual cues alone are insufficient to guide precise manipulation. The model acquires this tactile-aware behavior through co-training on trajectories from both physical robot demonstrations and simulated tasks, supplemented with web-scale visual question answering data.
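Co-training on several data sources is commonly implemented by drawing each training example from a weighted mixture. The sketch below illustrates that general technique only; the source names and the weights in `MIXTURE` are assumptions for illustration, since Microsoft has not published the proportions it uses.

```python
import random
from collections import Counter
from typing import Dict, List


def sample_batch_sources(weights: Dict[str, float],
                         batch_size: int,
                         rng: random.Random) -> List[str]:
    """Pick a data source for each example in a batch, proportional to weight."""
    if any(w < 0 for w in weights.values()):
        raise ValueError("mixture weights must be non-negative")
    names = list(weights)
    return rng.choices(names, weights=[weights[n] for n in names], k=batch_size)


# Hypothetical mixture: these proportions are illustrative, not published values.
MIXTURE = {"real_robot": 0.3, "simulation": 0.5, "web_vqa": 0.2}

rng = random.Random(0)  # seeded so the draw is reproducible
batch = sample_batch_sources(MIXTURE, 1000, rng)
counts = Counter(batch)
```

Tuning the weights trades off scarce real-robot demonstrations against cheaper simulated and web data without changing the rest of the training loop.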

According to Microsoft Research, the model is currently under evaluation on dual-arm industrial robots and humanoid platforms, with the company planning to publish detailed technical specifications in the coming months. The system is designed to continually improve during deployment by learning from feedback provided by human operators—a capability that addresses one of robotics' persistent challenges: adaptation to dynamic, unstructured environments.

Strategic Context: Why Now?

The announcement arrives amid a broader industry shift toward moving robots beyond repetitive factory environments into the messy, dynamic spaces where humans actually live and work. This timing reflects several converging trends in AI and robotics development.

Foundation models have transformed adjacent domains. Generative models revolutionized language and vision processing over the past several years, and the robotics industry is now pursuing similar foundation-model approaches that can generalize across tasks rather than requiring task-specific programming. Microsoft's framing emphasizes that "Physical AI, where agentic AI meets physical systems, is poised to redefine robotics in the same way that generative models have transformed language and vision processing."

Training data scarcity remains a bottleneck. Microsoft tackles this challenge by combining physical demonstrations with synthetic data generated in simulation using NVIDIA Isaac Sim on Azure. The hybrid approach is meant to compensate for the difficulty of collecting enough real-world data for diverse manipulation tasks: teleoperation, while standard practice, is impractical or impossible in many settings.

Trust and adaptability matter for deployment. As robotics moves from controlled factory floors into human-shared spaces, the ability to adapt to dynamic situations and human preferences becomes essential for user acceptance. Microsoft explicitly stated: "We believe robots that can adapt more easily to dynamic situations and to human preferences will be more useful in the environments in which we live and work and more trusted by the people who deploy and operate them."

Broader Industry Context and Competition

The robotics foundation-model space remains nascent but increasingly competitive. Direct comparisons with competing models are scarce, but the field broadly treats foundation-style robotics models capable of generalization as the next frontier, moving beyond single-task automation toward adaptive systems.

Microsoft's approach builds on its existing work on the Phi series, a family of compact language and vision-language models designed for efficiency. This strategy lets the company apply proven techniques from language and vision AI to the physical world, a playbook that competitors such as Tesla (with its humanoid robot Optimus), Boston Dynamics, and others are also pursuing through different architectures.

The emphasis on tactile sensing and multi-modal perception distinguishes Rho-alpha from some competitors that rely primarily on vision-based approaches. This design choice reflects lessons learned across robotics research: contact-heavy tasks demand richer sensory input than cameras alone can provide.

Deployment and Access

Rho-alpha remains in an active research phase, with Microsoft planning to provide access through an early access program followed by availability via Microsoft Foundry, the company's AI infrastructure service. This staged rollout mirrors Microsoft's typical strategy for emerging research technologies, allowing partner organizations to test and refine the model before broader commercial deployment.

The reliance on synthetic training data and simulation infrastructure positions Microsoft's cloud services—particularly Azure and its integration with NVIDIA tools—as central to the model's continued development and scaling. This infrastructure advantage could prove significant for organizations lacking proprietary robotics training data or simulation capabilities.

Technical Challenges Ahead

Despite these advances, substantial engineering work remains. Microsoft has explicitly stated that the team is "working toward end-to-end optimizations of Rho-alpha's training pipeline and training data corpus for performance and efficiency on bimanual manipulation tasks." The company has not yet published a detailed technical description, though it has said it plans to do so in the coming months.

The challenge of scaling from research prototypes to production-grade systems persists. While Rho-alpha shows promise in controlled testing environments, real-world deployment in unstructured human spaces introduces conditions, such as changing lighting, unexpected objects, and human presence, that no training dataset fully captures. The model's ability to learn from human feedback during deployment is an attempt to address this gap, but the practical effectiveness of this approach remains to be demonstrated.

Implications for the Robotics Industry

Rho-alpha signals Microsoft's serious commitment to positioning itself as a foundational infrastructure provider for physical AI, not merely a software company observing robotics from the sidelines. By anchoring the model to its existing Phi vision-language-model family and Azure infrastructure, Microsoft creates potential lock-in advantages while reducing barriers to adoption for enterprises already embedded in its ecosystem.

The announcement also reinforces that the robotics industry is converging on foundation models as the primary research and development path forward—a shift that favors companies with existing expertise in large-scale model training, massive computational resources, and established relationships with enterprise customers.