MiniMax M2.5 Challenges Claude Opus with Open-Weights Alternative

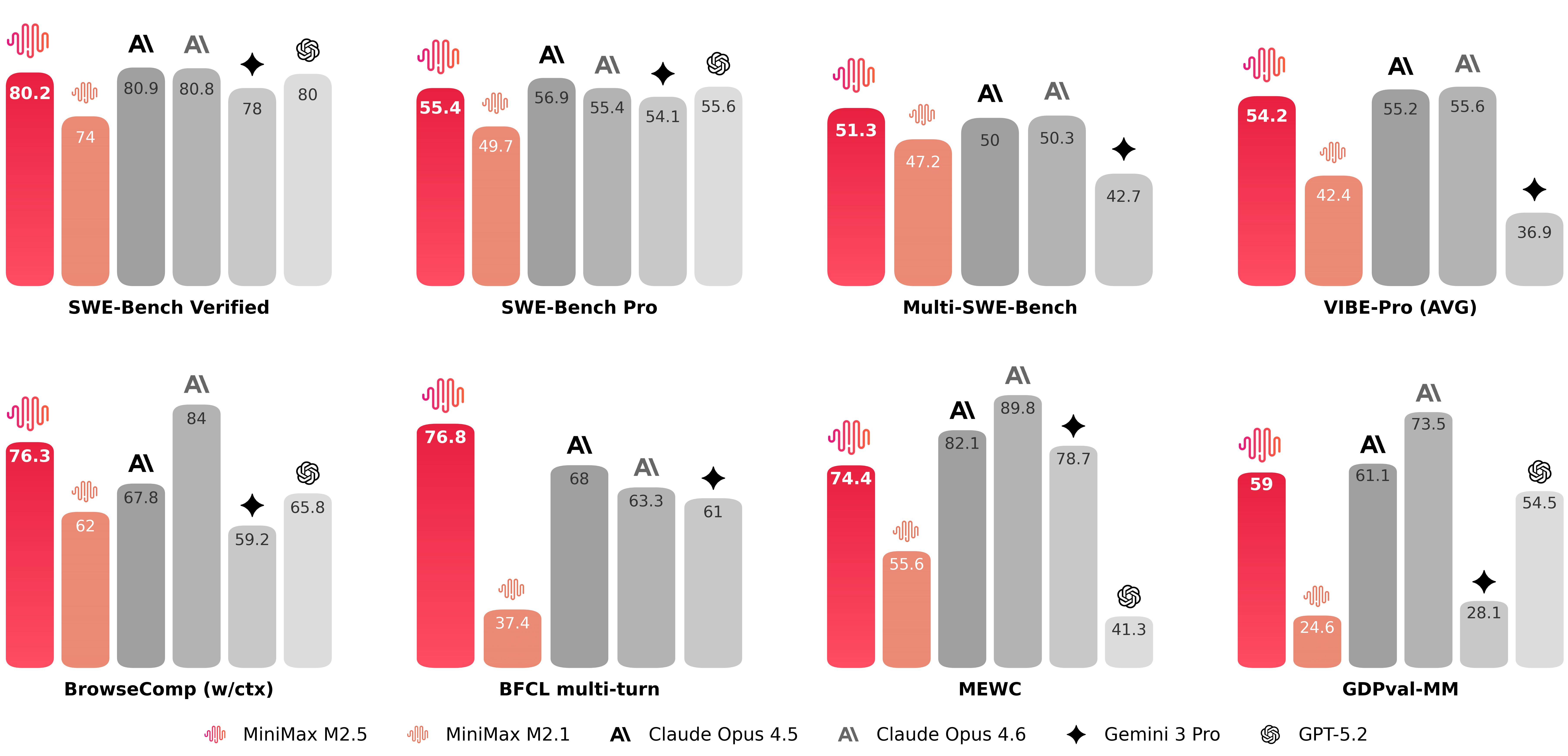

MiniMax says its new M2.5 model performs competitively with Claude Opus 4.6, offering an open-weights alternative designed for real-world productivity at a lower cost.

The Open-Weights Challenge to Anthropic's Dominance

The race for large language model supremacy just got more crowded. MiniMax has released its M2.5 model and is positioning it directly against Anthropic's Claude Opus 4.6. What makes this launch significant is not the capability claim itself but the strategy behind it: an open-weights alternative in a market increasingly dominated by proprietary systems.

The timing matters. As enterprises grapple with vendor lock-in concerns and rising API costs, MiniMax's approach targets real-world productivity use cases rather than chasing benchmark supremacy alone. This reflects a broader industry shift: companies are beginning to question whether the most expensive models are always the most practical.

Performance Claims and Competitive Positioning

According to MiniMax, M2.5 matches Claude Opus 4.6 on key benchmarks while requiring less compute to run. The model is available through multiple platforms, giving developers integration points outside the established providers.

The open-weights aspect is crucial. Claude remains proprietary and is reachable only through hosted services, whereas M2.5's published weights allow organizations to:

- Deploy models on-premises without API dependencies

- Fine-tune for domain-specific applications

- Avoid recurring usage fees at scale

- Maintain data sovereignty for sensitive workloads

Industry observers note that open-weights models are beginning to catch up to Claude's capabilities, suggesting the competitive landscape is fragmenting beyond the traditional closed-source incumbents.

Market Context: Cost vs. Capability

The M2.5 launch arrives amid growing scrutiny of AI model pricing. Enterprise customers increasingly question whether Claude Opus justifies its premium positioning when alternatives offer comparable performance at lower operational costs. MiniMax's model is explicitly designed for productivity workflows, targeting use cases where cost-per-inference matters as much as raw capability.

This isn't a race to the bottom on price. It's a reframing of value: MiniMax is arguing that for many real-world applications, the marginal performance gains of the most expensive models don't justify their cost structure.
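The cost argument above comes down to simple arithmetic, which the following Python sketch illustrates. It compares a hosted API billed per token with a self-hosted open-weights deployment that has a fixed monthly cost plus a smaller marginal cost. Every name and figure here is a hypothetical placeholder chosen for illustration; none of the prices come from MiniMax or Anthropic.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class CostModel:
    """Hypothetical cost inputs in USD; not real vendor pricing."""
    api_price_per_million_tokens: float       # hosted proprietary API
    selfhost_fixed_monthly: float             # hardware and operations
    selfhost_price_per_million_tokens: float  # marginal cost (power, etc.)


def monthly_cost_api(m: CostModel, million_tokens: float) -> float:
    """Monthly bill when every token goes through the hosted API."""
    return m.api_price_per_million_tokens * million_tokens


def monthly_cost_selfhost(m: CostModel, million_tokens: float) -> float:
    """Monthly bill for self-hosting: fixed cost plus marginal cost."""
    return (m.selfhost_fixed_monthly
            + m.selfhost_price_per_million_tokens * million_tokens)


def break_even_million_tokens(m: CostModel) -> Optional[float]:
    """Monthly volume (in millions of tokens) above which self-hosting
    is cheaper. Returns None if self-hosting never becomes cheaper."""
    saving_per_unit = (m.api_price_per_million_tokens
                       - m.selfhost_price_per_million_tokens)
    if saving_per_unit <= 0:
        return None
    return m.selfhost_fixed_monthly / saving_per_unit


# Placeholder numbers, assumed purely for the example.
example = CostModel(
    api_price_per_million_tokens=20.0,
    selfhost_fixed_monthly=9000.0,
    selfhost_price_per_million_tokens=2.0,
)
print(break_even_million_tokens(example))  # 500.0
```

With these assumed inputs, the two options cost the same at 500 million tokens per month. Below that volume the hosted API is cheaper, and above it self-hosting wins. Real comparisons would also need to account for quality differences, engineering time, and utilization, which this sketch deliberately leaves out.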

What This Means for the Industry

The M2.5 release signals that the LLM market is maturing beyond hype cycles. Enterprises now have legitimate alternatives to evaluate, and the burden of proof shifts to market leaders like Anthropic to justify premium positioning. Open-weights models are no longer experimental; they are becoming production-grade options.

For developers and organizations, this creates a practical choice: proprietary convenience at a higher price, or open-weights flexibility with lower per-inference costs but the added responsibility of running the infrastructure. Neither approach is universally superior; the decision depends on specific requirements around latency, customization, and budget.

The real story isn't whether M2.5 is objectively "better" than Claude Opus. It's that the market is finally developing genuine competition in the frontier model space, forcing all players to justify their positioning beyond marketing claims.