MiniMax M2.5: The Open Source Coding Model Reshaping AI Development

MiniMax M2.5 is an open-weight model whose reported coding performance rivals that of proprietary systems. Here's why developers are taking notice.

The Open Source Coding Revolution Just Got Real

The competitive landscape for AI coding models has shifted. While proprietary giants dominate headlines, MiniMax M2.5 offers an open-source alternative that posts state-of-the-art results on coding benchmarks. This is more than incremental progress: it gives developers a realistic path away from vendor lock-in.

What Makes M2.5 a Breakthrough

MiniMax M2.5 distinguishes itself on three fronts: benchmark results, efficiency, and open weights.

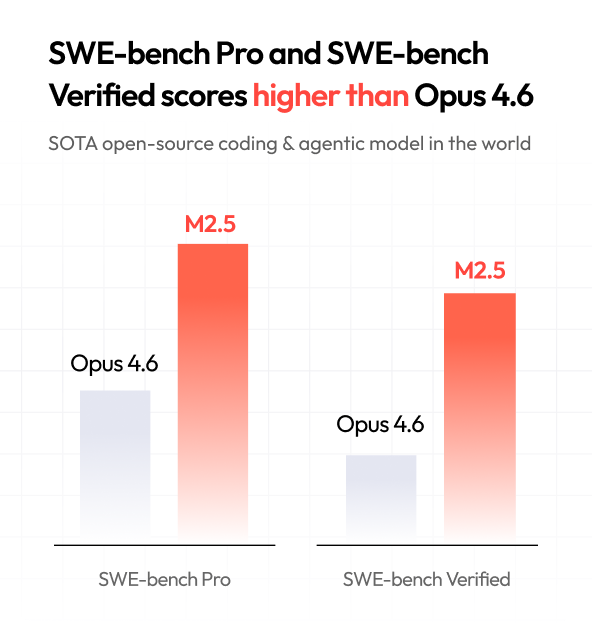

Performance on Benchmarks: M2.5 is reported to achieve top-tier results on SWE-bench Verified, a human-validated benchmark of software engineering tasks drawn from real GitHub issues. This metric matters because it measures whether a model can resolve issues in real repositories, not just pattern-match on toy problems.

Speed and Efficiency: The model balances capability with computational efficiency, which lowers the hardware needed to self-host it. This opens advanced coding assistance to teams without unlimited infrastructure budgets.

Open Weights: Unlike models that are labeled "open" but can only be reached through a hosted API, M2.5 ships with open weights, so researchers and developers can fine-tune, audit, and customize the model for specific use cases.
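
To make the SWE-bench Verified point above concrete: the benchmark counts a task as resolved only when the model's patch makes the previously failing tests pass without breaking tests that already passed. The sketch below illustrates that scoring rule in Python. The `TaskResult` type, its field names, and the sample data are hypothetical; this is not the official evaluation harness, and the numbers are not M2.5 results.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TaskResult:
    """Outcome of applying a model-generated patch to one task (hypothetical shape)."""
    task_id: str
    fail_to_pass: dict[str, bool]  # tests the fix must turn green -> did they pass?
    pass_to_pass: dict[str, bool]  # tests that must stay green -> did they pass?


def is_resolved(result: TaskResult) -> bool:
    """A task counts only if every target test passes and nothing regresses."""
    return all(result.fail_to_pass.values()) and all(result.pass_to_pass.values())


def resolve_rate(results: list[TaskResult]) -> float:
    """Fraction of tasks resolved; 0.0 for an empty run."""
    if not results:
        return 0.0
    return sum(is_resolved(r) for r in results) / len(results)


# Made-up results for illustration only.
sample = [
    TaskResult("task-a", {"test_fix": True}, {"test_existing": True}),
    TaskResult("task-b", {"test_fix": True}, {"test_existing": False}),  # regression
    TaskResult("task-c", {"test_fix": False}, {"test_existing": True}),  # bug not fixed
    TaskResult("task-d", {"test_fix": True}, {}),
]

print(f"resolved {resolve_rate(sample):.0%} of {len(sample)} tasks")
```

The all-or-nothing rule is what separates this kind of score from snippet-level benchmarks: a patch that fixes the bug but breaks an existing test earns no credit.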

The Broader Context: Chinese AI Innovation

M2.5 arrives amid a broader wave of Chinese AI innovation challenging Western dominance. The competition is intensifying, with models like GLM-5 and others demonstrating that innovation isn't geographically concentrated. This competitive pressure benefits the entire ecosystem: it forces rapid iteration and prevents complacency.

Why This Matters for Developers

Independence: Teams can now build production systems on open-source coding models without relying on API quotas or vendor pricing decisions.

Transparency: Open weights enable security audits and bias detection that proprietary models obscure behind corporate walls.

Customization: Fine-tuning capabilities mean organizations can adapt M2.5 to domain-specific coding patterns, whether that means legacy system maintenance or emerging frameworks.
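
In practice, the independence point above usually comes down to pointing existing tooling at a server you run yourself. The sketch below builds a chat request for a self-hosted endpoint using only Python's standard library. It assumes the serving stack exposes an OpenAI-compatible `/v1/chat/completions` route. The base URL and the model identifier `minimax-m2.5` are placeholders, not values confirmed by MiniMax, so check your inference server's documentation for the real ones.

```python
import json
import urllib.request

# Assumptions: a locally hosted server with an OpenAI-compatible API.
BASE_URL = "http://localhost:8000/v1"  # hypothetical address
MODEL_NAME = "minimax-m2.5"            # hypothetical model identifier


def build_chat_request(prompt: str, base_url: str = BASE_URL,
                       model: str = MODEL_NAME) -> urllib.request.Request:
    """Build (but do not send) a chat-completions request."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
        "temperature": 0.2,
    }
    return urllib.request.Request(
        url=f"{base_url}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def send(request: urllib.request.Request, timeout: float = 60.0) -> str:
    """Send the request and return the text of the first choice."""
    with urllib.request.urlopen(request, timeout=timeout) as response:
        body = json.load(response)
    return body["choices"][0]["message"]["content"]


request = build_chat_request("Write a function that reverses a linked list.")
print(request.full_url)
# reply = send(request)  # uncomment once a server is running at BASE_URL
```

Under that assumption, the request shape does not depend on who hosts the model, so moving between a local deployment and a hosted provider is mostly a matter of changing the base URL and credentials.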

The Reality Check

While M2.5 represents genuine progress, the broader AI landscape continues to evolve rapidly. Proprietary models still hold advantages in certain domains, and the gap is narrowing incrementally rather than disappearing overnight. The real victory here is choice: developers now have a credible open-source option that no longer demands a major compromise on coding capability.

What's Next

The emergence of competitive open-source coding models suggests a bifurcating market: proprietary solutions optimized for maximum capability, and open alternatives optimized for control and customization. Organizations will increasingly choose based on their specific constraints rather than defaulting to closed systems.

For developers evaluating coding AI tools, M2.5 deserves serious consideration. It's no longer about choosing open source for ideological reasons; it's about choosing the right tool for the job. In this case, that tool happens to be open.