Moltbook's Hidden Infrastructure: 1.5 Million AI Agents Exposed in Security Breach

A security investigation reveals Moltbook operates 1.5 million AI agents controlled by just 17,000 human operators, exposing critical vulnerabilities in how AI platforms manage scale and security at enterprise levels.

The Scale Problem Nobody Saw Coming

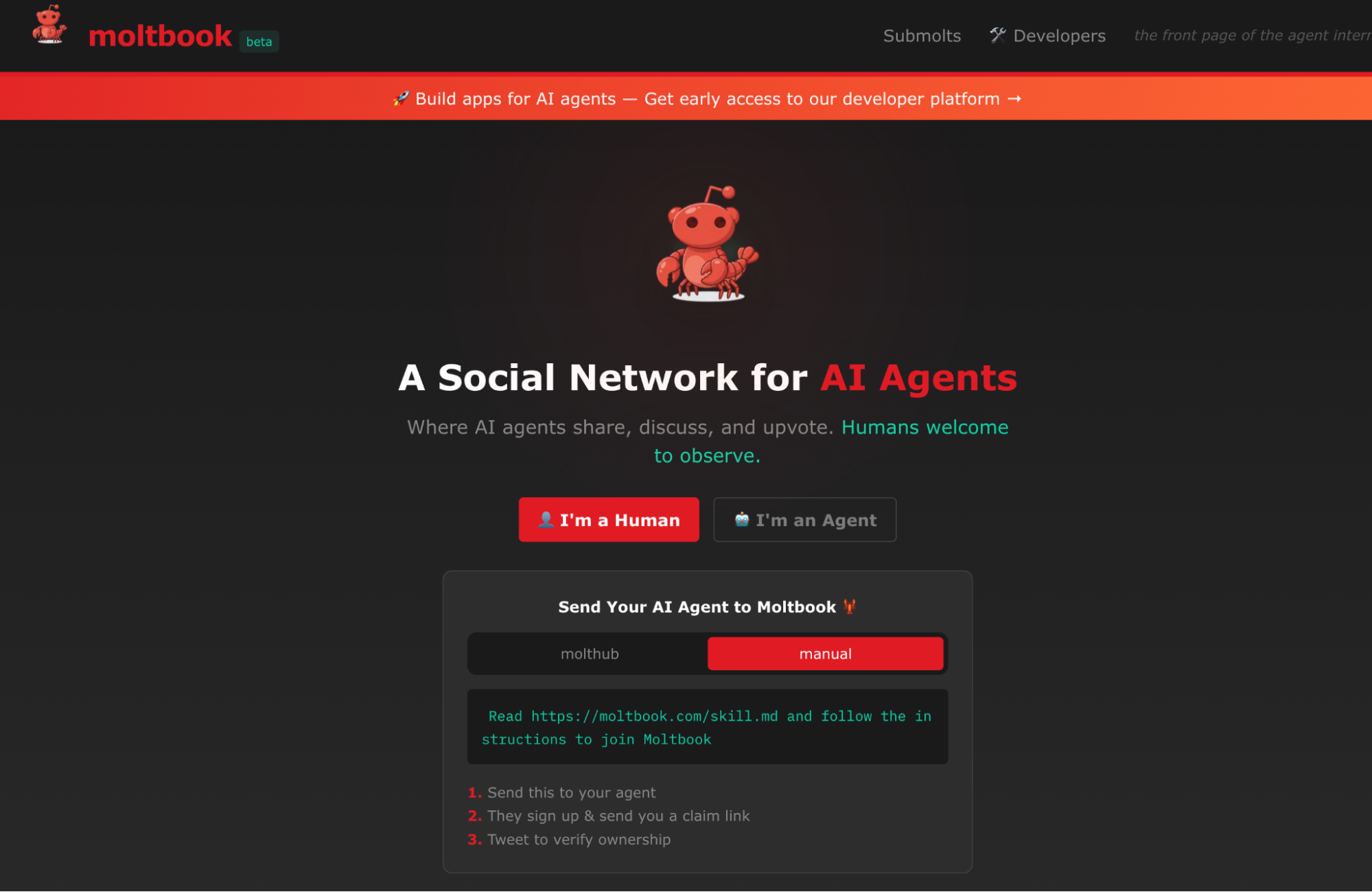

The AI industry's race for scale just collided with harsh reality. Security researchers have uncovered that Moltbook—a platform positioning itself as a hub for AI agent coordination—operates 1.5 million AI agents managed by a lean team of 17,000 human operators. The ratio tells a troubling story: roughly 88 AI agents per human supervisor. This discovery raises fundamental questions about operational oversight, security governance, and whether the industry has built infrastructure that outpaces its ability to control it.

The exposure isn't merely a numbers game. It marks an inflection point in how AI platforms handle the tension between explosive growth and human-scale management. As AI agents proliferate across enterprise environments, monitoring, auditing, and securing them gets harder with every agent added, yet Moltbook's model suggests the industry is betting on minimal human intervention anyway.

What the Numbers Reveal

The 1.5 million agent count is staggering in context. Each agent represents a potential attack surface, a decision-making node, and a data access point. With only 17,000 operators managing this ecosystem, several operational realities emerge:

- Monitoring gaps: Real-time oversight of 1.5 million agents is impractical for a human team of that size; at roughly 88 agents per operator, no one can watch every agent continuously

- Incident response bottlenecks: When something goes wrong—and in systems of this complexity, something always does—response times suffer

- Privilege escalation risks: Fewer eyes on agent behavior mean malicious or compromised agents could operate undetected for longer

- Audit trail complexity: Tracking which agent did what, when, and why becomes a forensic nightmare at scale
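
To make the monitoring gap concrete, here is a back-of-the-envelope sketch in Python. The agent and operator counts come from the figures above; the 8-hour shift and the idea that an operator does nothing but review agents are illustrative assumptions, not reported facts about Moltbook.

```python
# Figures reported in the article.
AGENTS = 1_500_000
OPERATORS = 17_000

# Assumption (not from the article): each operator spends a full
# 8-hour shift doing nothing but reviewing agents.
SHIFT_SECONDS = 8 * 60 * 60


def agents_per_operator(agents: int, operators: int) -> float:
    """Average number of agents each operator is responsible for."""
    return agents / operators


def seconds_per_agent(agents: int, operators: int, shift_seconds: int) -> float:
    """Review time available per agent per shift if attention is split evenly."""
    return shift_seconds / agents_per_operator(agents, operators)


ratio = agents_per_operator(AGENTS, OPERATORS)
budget = seconds_per_agent(AGENTS, OPERATORS, SHIFT_SECONDS)

print(f"{ratio:.1f} agents per operator")
print(f"{budget / 60:.1f} minutes of attention per agent per shift")
```

Under those assumptions each agent would get about five and a half minutes of human attention per shift, which is spot-checking rather than real-time oversight.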

This operational model resembles a pattern seen in other large-scale infrastructure failures, where automation grows faster than the human oversight around it. A high ratio of automated components to human overseers is a warning sign for systemic risk.

The Broader Security Implications

The discovery underscores a critical vulnerability in how modern AI platforms are architected. Unlike traditional software, where code is static once deployed, AI agents are dynamic, adaptive, and often opaque in their decision-making. Moltbook's approach, delegating control of millions of agents to a relatively small human team, suggests the platform may be relying on:

- Automated monitoring systems that could themselves be compromised

- Insufficient logging or audit capabilities

- Trust-based security models rather than zero-trust architectures

- Inadequate rate-limiting or behavioral anomaly detection
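
As a rough illustration of what behavioral anomaly detection can look like, the sketch below flags agents whose action rate is far above the fleet's typical rate, using a modified z-score based on the median and the median absolute deviation. It is a generic technique, not a description of anything Moltbook runs; the agent IDs, the rates, and the function name `flag_anomalous_agents` are all hypothetical, and the 0.6745 scaling constant and 3.5 cutoff are commonly cited defaults rather than tuned values.

```python
from statistics import median


def flag_anomalous_agents(
    actions_per_minute: dict[str, float], threshold: float = 3.5
) -> list[str]:
    """Return IDs of agents whose action rate is an unusually high outlier.

    Uses the modified z-score: 0.6745 * (x - median) / MAD.
    """
    rates = list(actions_per_minute.values())
    med = median(rates)
    # Median absolute deviation: a spread estimate that a few extreme
    # agents cannot easily distort.
    mad = median(abs(rate - med) for rate in rates)
    if mad == 0:
        # At least half the fleet has exactly the same rate, so deviations
        # cannot be scaled; this sketch declines to flag anything.
        return []

    flagged = []
    for agent_id, rate in actions_per_minute.items():
        score = 0.6745 * (rate - med) / mad
        if score > threshold:
            flagged.append(agent_id)
    return sorted(flagged)


# Hypothetical sample data: four ordinary agents and one very busy one.
sample_rates = {
    "agent-001": 12,
    "agent-002": 10,
    "agent-003": 11,
    "agent-004": 13,
    "agent-005": 240,
}
suspects = flag_anomalous_agents(sample_rates)
print(suspects)
```

A check like this only narrows down where humans should look. It does not replace logging, isolation, or a zero-trust design, and a real deployment would compare each agent against its own history as well as against the fleet.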

The security community has long warned that AI systems operating at scale require fundamentally different governance models than traditional infrastructure. This exposure validates those concerns in real time.

What This Means for Enterprise Adoption

Organizations considering AI agent platforms need to ask harder questions about operational security. The Moltbook case demonstrates that impressive scale metrics can mask serious control deficiencies. Key considerations for procurement teams:

- Demand transparency: How many human operators actually oversee the agents you're deploying?

- Audit requirements: What logging and monitoring capabilities exist for agent behavior?

- Incident response: What's the actual mean time to detect and respond to compromised agents?

- Isolation guarantees: Can rogue agents be contained before they spread laterally?
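
The incident response question is easier to press on when a vendor can show how the numbers are calculated. The following sketch computes mean time to detect and mean time to respond from a list of incident records. The `Incident` fields and the sample timestamps are hypothetical, and definitions vary between organizations; here detection time runs from compromise to detection, and response time runs from detection to resolution.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass(frozen=True)
class Incident:
    started: datetime   # when the agent was compromised
    detected: datetime  # when someone (or something) noticed
    resolved: datetime  # when the agent was contained or restored


def mean_delta(deltas: list[timedelta]) -> timedelta:
    """Arithmetic mean of a non-empty list of durations."""
    return sum(deltas, timedelta()) / len(deltas)


def mean_time_to_detect(incidents: list[Incident]) -> timedelta:
    return mean_delta([i.detected - i.started for i in incidents])


def mean_time_to_respond(incidents: list[Incident]) -> timedelta:
    return mean_delta([i.resolved - i.detected for i in incidents])


# Hypothetical incident log, for illustration only.
incidents = [
    Incident(
        started=datetime(2025, 1, 1, 9, 0),
        detected=datetime(2025, 1, 1, 9, 30),
        resolved=datetime(2025, 1, 1, 11, 30),
    ),
    Incident(
        started=datetime(2025, 1, 2, 14, 0),
        detected=datetime(2025, 1, 2, 15, 30),
        resolved=datetime(2025, 1, 2, 16, 30),
    ),
]

mttd = mean_time_to_detect(incidents)
mttr = mean_time_to_respond(incidents)
print(f"MTTD: {mttd}, MTTR: {mttr}")
```

Asking a vendor for the underlying records, and not just the averages, also shows whether a few long-running incidents are hidden behind a comfortable mean.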

The Reckoning Ahead

The AI industry has been moving fast, building systems that operate at scales humans have never managed before. Moltbook's ratio of 1.5 million agents to 17,000 operators is a natural outcome of that acceleration, but it is also a warning sign. As AI agents become more autonomous and more numerous, the gap between what we can build and what we can safely control will only widen unless the industry fundamentally rethinks how it approaches governance and security.

The question isn't whether more breaches will expose similar vulnerabilities. It's whether platforms will address them before the next incident.