Nvidia's Groq Chips Challenge China's AI Inference Market

Nvidia is expanding its AI chip portfolio with Groq LPU technology targeting China, signaling a strategic shift in inference computing as competition intensifies in the region's booming AI sector.

The Inference Wars Heat Up in China

The battle for AI dominance in China just shifted gears. While competitors scramble to capture the inference market, Nvidia is readying Groq AI chips specifically for the Chinese market, a strategic move in a region where AI adoption is accelerating rapidly. This isn't just another product launch; it's a calculated response to changing market dynamics and emerging competition in one of the world's most valuable AI ecosystems.

Understanding Groq: Beyond Traditional GPUs

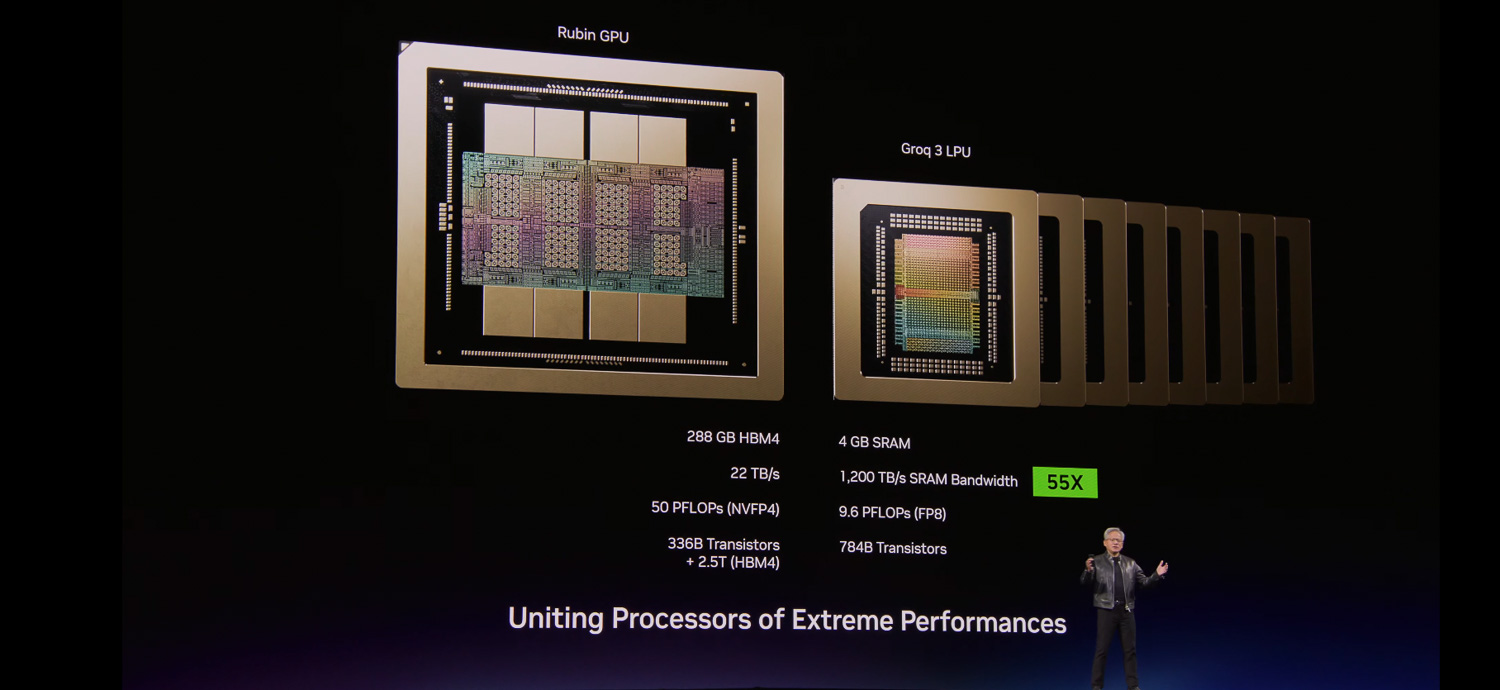

Nvidia's Groq technology represents a fundamental departure from its traditional GPU-centric approach. Unlike GPUs, which are general-purpose accelerators used heavily for training massive language models, Groq LPUs (Language Processing Units) are purpose-built for inference: the computationally intensive process of running trained AI models at scale.

Key technical distinctions:

- Specialized architecture: Groq chips are designed to reduce the memory-bandwidth bottlenecks that constrain GPU-based inference

- Throughput optimization: LPUs deliver faster token generation for real-time AI applications

- Cost efficiency: Lower power consumption compared to traditional GPU inference clusters

- Latency reduction: Critical for applications requiring sub-millisecond response times
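To see why memory bottlenecks matter so much for token generation, consider a rough rule of thumb: when producing one token requires reading every model weight once, generation speed is capped by memory bandwidth divided by model size. The sketch below illustrates that relationship only. The function name, the 70B-parameter model, and both bandwidth figures are hypothetical placeholders chosen for round numbers, not specifications of any Nvidia or Groq product.

```python
# Back-of-the-envelope model of memory-bound token generation.
# All numbers below are hypothetical placeholders, not vendor specifications.

def decode_tokens_per_second(params_billion: float,
                             bytes_per_param: float,
                             bandwidth_gb_per_s: float) -> float:
    """Rough ceiling on single-stream decode speed.

    Assumes each generated token requires reading every model weight once,
    so throughput is limited by memory bandwidth rather than compute.
    """
    # 1e9 parameters * bytes per parameter = size in gigabytes
    model_size_gb = params_billion * bytes_per_param
    return bandwidth_gb_per_s / model_size_gb


if __name__ == "__main__":
    # Hypothetical 70B-parameter model stored at 1 byte per parameter.
    for label, bandwidth in [("lower-bandwidth memory", 3_500.0),
                             ("higher-bandwidth memory", 35_000.0)]:
        rate = decode_tokens_per_second(70, 1.0, bandwidth)
        print(f"{label}: ~{rate:.0f} tokens/s ceiling")
```

Under this simplified model, a tenfold increase in effective memory bandwidth raises the per-stream ceiling tenfold. Real systems batch requests and split models across chips, so actual figures will differ.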

According to recent analysis, Nvidia's inference bet poses both challenge and opportunity in China's competitive landscape, where local players and international competitors are rapidly scaling AI services.

Why China Matters for Inference

China's AI market differs fundamentally from Western markets. The region's emphasis on large-scale deployment of AI services—from e-commerce recommendations to financial modeling—creates enormous demand for efficient inference infrastructure. Companies operating in China face unique constraints:

- Regulatory requirements limiting reliance on foreign cloud infrastructure

- Cost pressures from intense competition among AI service providers

- Scale demands driven by more than a billion potential users

- Latency sensitivity for real-time applications across mobile and edge devices

By targeting China specifically, Nvidia acknowledges that inference workloads in the region require different optimization priorities than training-focused markets.

Strategic Implications

This move signals several important shifts in Nvidia's corporate strategy:

Market segmentation: Rather than a one-size-fits-all approach, Nvidia is tailoring chip architectures to regional market needs. The Groq launch in China reflects a recognition that the economics of inference differ significantly from those of training.

Competition response: Chinese AI companies and international competitors have been investing heavily in inference optimization. Groq represents Nvidia's answer to these emerging threats in a market where margins matter intensely.

Supply chain diversification: Samsung's involvement in manufacturing Groq chips suggests Nvidia is building redundancy in production capacity, critical for meeting China's massive demand.

The Inference Opportunity

The inference market represents a potentially multi-hundred-billion-dollar opportunity that has been largely overshadowed by training hype. As AI models mature and deployment accelerates, inference becomes the dominant cost driver: training is largely a one-time expense, while inference costs recur with every request served. Companies running ChatGPT-like services at scale can therefore end up spending far more on inference than on training.
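The arithmetic behind the inference-versus-training claim can be sketched in a few lines. This is a simplified illustration: the function names are made up for this example, and the training cost, request volume, and per-request cost are hypothetical inputs rather than reported figures for any real service.

```python
# Simplified comparison of one-time training cost and recurring inference cost.
# Every input value here is a hypothetical placeholder, not reported data.

def breakeven_days(training_cost_usd: float,
                   requests_per_day: float,
                   cost_per_request_usd: float) -> float:
    """Days of serving after which cumulative inference spend equals training spend."""
    daily_inference_cost = requests_per_day * cost_per_request_usd
    return training_cost_usd / daily_inference_cost


def inference_share(training_cost_usd: float,
                    requests_per_day: float,
                    cost_per_request_usd: float,
                    days: float) -> float:
    """Fraction of total spend (training + inference) that goes to inference."""
    inference_cost = requests_per_day * cost_per_request_usd * days
    return inference_cost / (inference_cost + training_cost_usd)


if __name__ == "__main__":
    # Hypothetical service: $50M training run, 10M requests/day, $0.01 per request.
    training, volume, unit_cost = 50_000_000, 10_000_000, 0.01
    print(f"break-even after {breakeven_days(training, volume, unit_cost):.0f} days")
    for days in (365, 1095):
        share = inference_share(training, volume, unit_cost, days)
        print(f"after {days} days, inference is {share:.0%} of total spend")
```

With these placeholder inputs, cumulative inference spending matches the training bill after about 500 days and keeps growing from there, while the training cost stays fixed. Higher request volumes shorten the break-even period proportionally.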

China's position as a manufacturing and deployment hub for AI services makes it the logical first market for specialized inference chips. Success here could establish Groq as the standard for inference workloads globally.

What's Next

The competitive landscape will intensify as Groq enters the Chinese market. Local competitors, cloud providers, and international chipmakers will respond with their own inference solutions. The real test will be whether Groq's technical advantages translate into market adoption among Chinese enterprises and cloud providers operating under unique regulatory and economic constraints.

For Nvidia, this represents a calculated bet that inference—not training—will define the next phase of AI infrastructure competition.