Nvidia's OpenClaw Guide: Running AI Agents Locally on RTX GPUs

Nvidia has released a comprehensive guide for deploying OpenClaw AI agents locally, enabling developers to harness autonomous AI capabilities on consumer and enterprise GPUs without cloud dependencies.

The Race for Local AI Agent Dominance

The competitive landscape for AI agent frameworks just shifted. While cloud-based AI solutions dominate enterprise deployments, Nvidia has published a detailed guide for running OpenClaw AI agents directly on local hardware, from consumer RTX GPUs to enterprise DGX systems. The move broadens access to autonomous agents and challenges the cloud-first narrative that has defined AI infrastructure for the past two years.

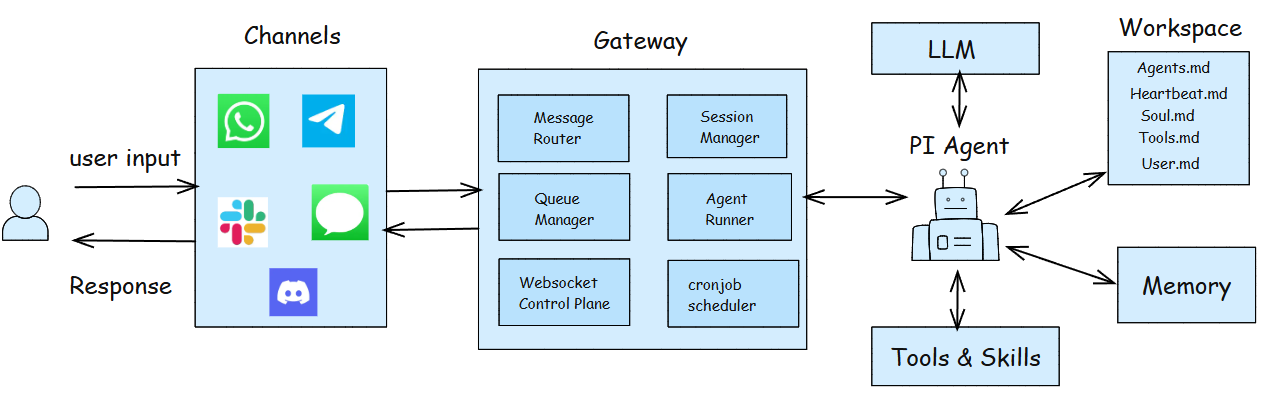

OpenClaw represents a significant evolution in open-source AI tooling. Unlike traditional chatbots or language models, OpenClaw functions as an autonomous agent that can reason, plan, and execute multi-step tasks across multiple domains. Its architecture lets agents interact with APIs, databases, and external systems with minimal human intervention, a capability that carries both substantial potential and legitimate security concerns.

What Nvidia's Guide Covers

Nvidia's technical documentation provides a structured pathway for developers to deploy OpenClaw in local environments. The guide addresses several critical implementation areas:

- Hardware Requirements: Specifications for RTX GPUs, memory allocation, and optimization strategies

- Installation & Configuration: Step-by-step setup procedures for different operating systems

- Performance Tuning: Best practices for maximizing inference speed and reducing latency

- Integration Patterns: Methods for connecting OpenClaw agents to existing applications and workflows
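As a rough illustration of the hardware-requirements step, the Python sketch below lists the GPUs on a machine and filters them by total memory. It is not taken from Nvidia's guide. It assumes the NVIDIA driver's `nvidia-smi` tool is on the PATH, and the memory threshold is a caller-supplied placeholder because this article does not reproduce the guide's actual figures. The function names are hypothetical.

```python
import subprocess


def parse_gpu_info(csv_text):
    """Parse 'name, memory_mib' lines into a list of (name, memory_mib) tuples."""
    gpus = []
    for line in csv_text.strip().splitlines():
        # Split on the last comma so a comma inside a GPU name cannot break parsing.
        name, mem = line.rsplit(",", 1)
        gpus.append((name.strip(), int(mem.strip())))
    return gpus


def query_gpus():
    """Ask nvidia-smi for each GPU's name and total memory in MiB."""
    out = subprocess.run(
        ["nvidia-smi", "--query-gpu=name,memory.total",
         "--format=csv,noheader,nounits"],
        capture_output=True, text=True, check=True,
    ).stdout
    return parse_gpu_info(out)


def gpus_meeting_minimum(gpus, min_memory_mib):
    """Return the names of GPUs with at least min_memory_mib of total memory."""
    return [name for name, mem in gpus if mem >= min_memory_mib]
```

On a machine with the driver installed, `gpus_meeting_minimum(query_gpus(), min_memory_mib)` returns the cards that clear whatever minimum the chosen model requires.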

According to the dev.to technical breakdown, the guide emphasizes practical implementation over theoretical concepts, making it accessible to developers with varying levels of AI expertise. The documentation includes code examples, configuration templates, and troubleshooting sections designed to accelerate deployment timelines.

Why Local Deployment Matters

Running AI agents locally offers distinct advantages over cloud-based alternatives:

- Cost Efficiency: Eliminates per-inference API charges and reduces bandwidth costs for organizations processing high volumes of agent requests.

- Data Privacy: Keeps sensitive information within organizational boundaries, addressing compliance requirements in regulated industries.

- Latency Reduction: Local execution removes network round-trip delays, enabling real-time agent decision-making for time-sensitive applications.

- Customization: Developers gain full control over model parameters, system prompts, and agent behavior without vendor constraints.

However, security teams must carefully evaluate OpenClaw deployments, as autonomous agents with system access introduce new attack surfaces. The framework's ability to execute code and interact with external systems requires robust access controls and monitoring.
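One common way to enforce such access controls is a deny-by-default allowlist between the agent and the tools it can invoke. The Python sketch below shows the idea in isolation. `ToolGate`, `ToolNotAllowed`, and the tool names are hypothetical and are not part of OpenClaw's API; how a real deployment wires this into the agent is left open.

```python
from dataclasses import dataclass, field


class ToolNotAllowed(Exception):
    """Raised when the agent requests a registered tool that is not allowlisted."""


@dataclass
class ToolGate:
    registry: dict = field(default_factory=dict)  # tool name -> callable
    allowed: set = field(default_factory=set)     # names the agent may invoke

    def register(self, name, func, allow=False):
        """Register a tool; it stays blocked unless allow=True is passed."""
        self.registry[name] = func
        if allow:
            self.allowed.add(name)

    def call(self, name, *args, **kwargs):
        """Run a tool only if it is both registered and allowlisted."""
        if name not in self.registry:
            raise KeyError(f"unknown tool: {name}")
        if name not in self.allowed:
            raise ToolNotAllowed(name)
        return self.registry[name](*args, **kwargs)


# Hypothetical tools: one read-only and permitted, one destructive and blocked.
gate = ToolGate()
gate.register("read_status", lambda: "ok", allow=True)
gate.register("delete_records", lambda: "deleted")
```

Here `gate.call("read_status")` runs normally, while `gate.call("delete_records")` raises `ToolNotAllowed` until an operator explicitly allowlists it.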

Deployment Scenarios

Atlantic.net's deployment guide and Jitendra Zaa's technical analysis demonstrate multiple implementation patterns:

- Development Environments: Local testing and experimentation on individual developer machines

- Hybrid Architectures: Local agents handling sensitive operations while cloud services manage non-critical tasks

- Enterprise Deployments: On-premises infrastructure for organizations requiring complete data sovereignty
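The hybrid pattern in particular comes down to a routing decision made per task. A minimal Python sketch of that decision follows, assuming each task already carries a sensitivity flag. The `Task` and `Target` types and the `route` function are hypothetical illustrations, not code from any of the guides mentioned here.

```python
from dataclasses import dataclass
from enum import Enum


class Target(Enum):
    LOCAL = "local"
    CLOUD = "cloud"


@dataclass(frozen=True)
class Task:
    description: str
    contains_sensitive_data: bool


def route(task, cloud_enabled=True):
    """Keep sensitive work on local hardware; send the rest to the cloud if enabled."""
    if task.contains_sensitive_data or not cloud_enabled:
        return Target.LOCAL
    return Target.CLOUD
```

Setting `cloud_enabled=False` collapses the same code path into the fully on-premises enterprise scenario, since every task then stays local.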

Technical Considerations

The guide emphasizes that successful OpenClaw deployments require attention to model selection, prompt engineering, and tool integration. Developers must carefully define agent capabilities, establish clear boundaries for autonomous actions, and implement comprehensive logging for audit trails.
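For the audit-trail requirement, one lightweight approach is to wrap every agent action in a decorator that writes a structured log entry on success or failure. The sketch below uses only Python's standard `logging`, `json`, and `functools` modules. The logger name `agent.audit`, the `audited` decorator, and the `lookup_order` action are hypothetical examples, not part of OpenClaw.

```python
import functools
import json
import logging

audit_log = logging.getLogger("agent.audit")


def audited(action_name):
    """Decorator that logs each call to an agent action as one JSON line."""
    def decorator(func):
        @functools.wraps(func)
        def wrapper(*args, **kwargs):
            record = {"action": action_name,
                      "args": repr(args), "kwargs": repr(kwargs)}
            try:
                result = func(*args, **kwargs)
            except Exception as exc:
                # Log the failure, then re-raise so callers still see the error.
                record["outcome"] = "error"
                record["error"] = repr(exc)
                audit_log.error(json.dumps(record))
                raise
            record["outcome"] = "ok"
            audit_log.info(json.dumps(record))
            return result
        return wrapper
    return decorator


@audited("lookup_order")
def lookup_order(order_id):
    # Placeholder action standing in for a real tool call.
    return {"order_id": order_id, "status": "shipped"}
```

Attaching a file or syslog handler to the `agent.audit` logger then yields a reviewable record of every action, including the ones that failed.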

Video tutorials accompanying the official documentation provide visual walkthroughs of the setup process, reducing implementation friction for teams new to autonomous agent frameworks.

Looking Forward

Nvidia's investment in local AI agent tooling reflects broader industry recognition that not all AI workloads belong in the cloud. As organizations seek greater control over their AI infrastructure, guides like this one become essential resources for technical teams navigating the transition from centralized cloud services to distributed, locally-deployed intelligence.

The OpenClaw ecosystem continues to evolve, with community contributions expanding agent capabilities and integration options. Developers interested in autonomous AI should review Nvidia's guide as a foundational resource for understanding both the potential and the practical requirements of local agent deployment.