OpenAI Explores Chip Alternatives as Nvidia Performance Concerns Mount

OpenAI is evaluating non-Nvidia chip options amid growing concerns about processing speed limitations in current GPU technology, signaling potential shifts in AI infrastructure strategy.

The Nvidia Monopoly Under Pressure

The AI infrastructure landscape is shifting. OpenAI, the company that helped spark the current AI boom, is now actively investigating alternatives to Nvidia's dominant GPU lineup—a move that underscores mounting frustration with the speed constraints of current-generation chips. This isn't merely a procurement decision; it represents a critical inflection point in how leading AI labs approach computational bottlenecks that could determine the pace of AI advancement itself.

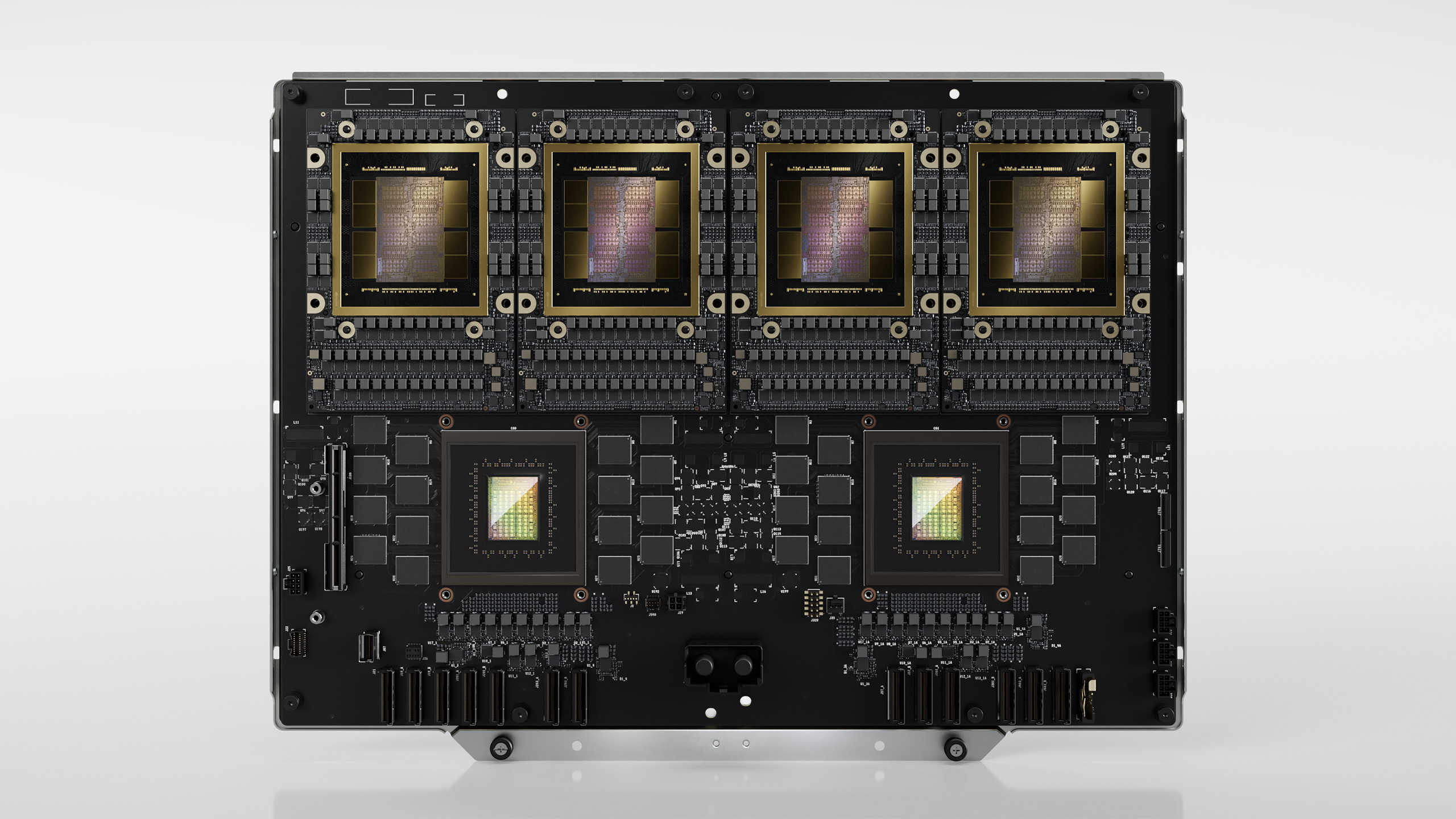

For years, Nvidia has enjoyed near-total dominance in the AI chip market, with companies like OpenAI, Google, and Meta relying heavily on GPUs such as the H100 and the newer Blackwell architecture. But as models grow larger and training requirements become more demanding, the limitations of even cutting-edge Nvidia silicon are becoming apparent. According to recent technical discussions, OpenAI's engineering teams have begun serious evaluations of alternative architectures that could deliver faster throughput and more efficient scaling.

What's Driving the Search?

The core issue centers on speed and latency. While Nvidia's GPUs excel at parallel processing, they face inherent bottlenecks when handling certain types of AI workloads—particularly those involving sequential reasoning, long-context processing, and real-time inference at scale. Technical analysis from industry observers suggests that these limitations become increasingly pronounced as model sizes approach and exceed 100 billion parameters.
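The difference between parallel throughput and sequential latency can be made concrete with a toy model. The sketch below is not taken from OpenAI or Nvidia. The function names and timing figures are hypothetical, and it makes the idealized assumption that the time per generation step stays constant as the batch grows. It only illustrates why adding parallel capacity can raise aggregate throughput without shortening the wait for a single response whose tokens must be produced one after another.

```python
def response_latency_s(num_tokens: int, step_latency_ms: float) -> float:
    """Seconds to finish one response when each token depends on the previous one."""
    return num_tokens * step_latency_ms / 1000.0


def aggregate_throughput(batch_size: int, step_latency_ms: float) -> float:
    """Tokens per second summed over all requests in a batch.

    Idealized assumption: one step serves the whole batch in the same
    time it would take to serve a single request.
    """
    return batch_size * 1000.0 / step_latency_ms


# Hypothetical figures, for illustration only.
STEP_LATENCY_MS = 20.0
RESPONSE_TOKENS = 500

for batch_size in (1, 8, 64):
    throughput = aggregate_throughput(batch_size, STEP_LATENCY_MS)
    latency = response_latency_s(RESPONSE_TOKENS, STEP_LATENCY_MS)
    print(f"batch={batch_size:>2}  tokens/s={throughput:>7.0f}  "
          f"latency per response={latency:.1f}s")
```

In this simplified picture, throughput scales with batch size while the per-response latency does not move, which is the kind of bottleneck that faster sequential execution, rather than more parallelism, would have to address.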

OpenAI's investigation reportedly includes:

- Custom silicon development – Exploring in-house chip design to optimize for specific workload patterns

- Alternative GPU architectures – Evaluating AMD's MI300 series and other non-Nvidia options

- Heterogeneous computing – Combining different processor types (CPUs, GPUs, TPUs, specialized accelerators) for hybrid workloads

- Emerging startups – Assessing next-generation chip makers focused on AI-specific optimization

According to technical deep-dives, the gap between what Nvidia's current offerings deliver and the theoretical optimum for certain AI tasks has widened enough that alternative solutions warrant serious investment.

The Competitive Dimension

This move also reflects broader competitive dynamics. Industry observers note that Google's development of TPUs and Meta's investment in custom silicon have demonstrated that vertically integrated chip strategies can yield significant advantages. OpenAI's exploration suggests the company may be reconsidering its reliance on third-party hardware vendors.

Nvidia's response has been to accelerate its own roadmap, with the Blackwell architecture representing a substantial leap forward. However, technical assessments indicate that even these advances may not fully address the specific bottlenecks OpenAI has identified.

What This Means for the Industry

If OpenAI successfully develops or adopts non-Nvidia alternatives, the implications would be seismic:

- Supply chain diversification – Reduced dependency on a single vendor could stabilize GPU availability and pricing

- Innovation acceleration – Competition in chip design could drive faster performance improvements across the board

- Cost structure changes – Custom silicon could reduce per-unit computational costs, affecting AI service pricing

- Geopolitical implications – Reduced reliance on Nvidia could reshape export control dynamics around AI chips
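The cost argument in the list above comes down to simple arithmetic: the price of serving tokens depends on what an accelerator costs per hour and how many tokens it produces in that hour. The following is a minimal sketch under stated assumptions. The function name, the hourly costs, and the throughput numbers are all hypothetical and do not describe any real chip or vendor.

```python
def cost_per_million_tokens(hourly_cost_usd: float, tokens_per_second: float) -> float:
    """Serving cost in USD per one million generated tokens."""
    if tokens_per_second <= 0:
        raise ValueError("tokens_per_second must be positive")
    tokens_per_hour = tokens_per_second * 3600
    return hourly_cost_usd / tokens_per_hour * 1_000_000


# Hypothetical accelerators, for illustration only.
options = {
    "general-purpose GPU": {"hourly_cost_usd": 4.00, "tokens_per_second": 2000},
    "custom accelerator": {"hourly_cost_usd": 3.00, "tokens_per_second": 2500},
}

for name, spec in options.items():
    cost = cost_per_million_tokens(**spec)
    print(f"{name}: ${cost:.3f} per million tokens")
```

Under these made-up inputs, a chip that is both cheaper per hour and faster per token lowers the unit cost on both sides of the ratio, which is why even modest efficiency gains could feed through to AI service pricing.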

Broader industry commentary suggests OpenAI's move isn't an isolated case but rather the beginning of a larger trend toward chip diversification among major AI labs.

The Path Forward

OpenAI's chip exploration is still in early stages, and technical discussions suggest no immediate shift away from Nvidia infrastructure. However, the company's willingness to invest resources in alternatives signals that the era of unchallenged GPU dominance may be ending.

The real question isn't whether Nvidia will lose its market position entirely—the company's engineering talent and ecosystem advantages remain formidable. Rather, the question is whether the AI industry can support multiple competitive chip architectures, each optimized for different workload profiles. Industry analysts suggest that outcome would ultimately benefit the entire sector by spurring innovation and reducing bottlenecks that currently constrain AI development.