OpenAI Unleashes Cerebras-Powered Codex Spark, Delivering 15x Speed Boost

OpenAI's new Codex Spark model runs on Cerebras chips, achieving dramatic performance gains over previous versions. The partnership marks a shift toward specialized hardware for AI inference and code generation.

The Hardware Wars Just Got Serious

The race for AI dominance is no longer just about model size—it's about the silicon underneath. OpenAI has partnered with Cerebras to deploy its latest code-generation model, and the results challenge conventional wisdom about how fast AI inference can actually run. This isn't a minor optimization; it's a fundamental rethinking of the infrastructure required to power production AI workloads.

What Is Codex Spark?

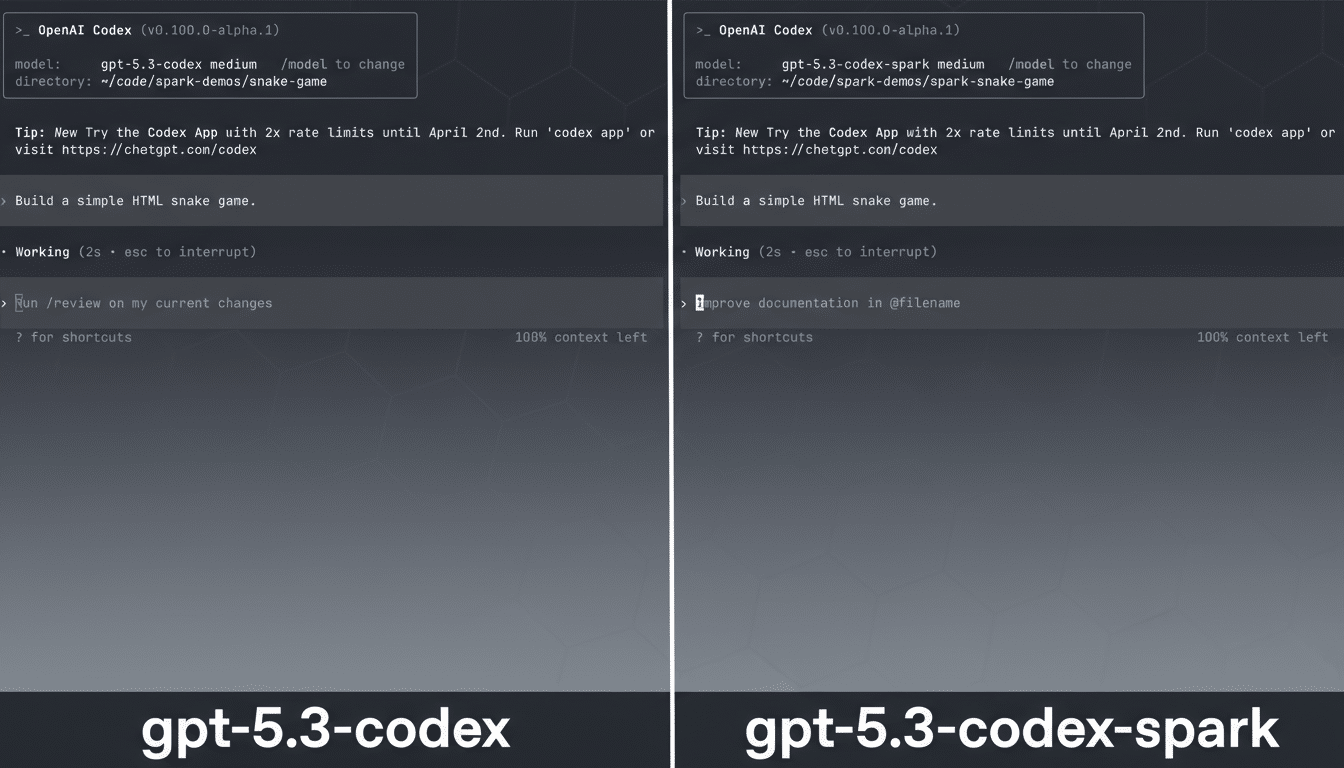

OpenAI's new Codex Spark is a specialized variant of the company's code-generation models, engineered to run on Cerebras' custom silicon. Unlike GPT-5.3 Codex, which relies on traditional GPU infrastructure, Spark uses Cerebras' wafer-scale architecture, a radically different approach to chip design that packs far more processing power into a single unit.

The performance differential is striking: according to available benchmarks, Codex Spark executes code generation tasks 15 times faster than GPT-5.3 Codex. For developers and enterprises relying on AI-assisted coding, this speed advantage translates directly into reduced latency and faster iteration cycles, and potentially into lower operational costs.
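To make the 15x figure concrete, here is a rough back-of-the-envelope sketch. It assumes the speedup applies uniformly to token throughput, which the benchmarks may or may not support. The baseline decode rate and completion size are hypothetical values chosen for illustration, not measured figures for either model.

```python
def generation_time_seconds(num_tokens: int, tokens_per_second: float) -> float:
    """Time to stream num_tokens at a steady decode rate."""
    if tokens_per_second <= 0:
        raise ValueError("tokens_per_second must be positive")
    return num_tokens / tokens_per_second


# Hypothetical inputs for illustration only; not measured figures.
BASELINE_TOKENS_PER_SECOND = 100.0  # assumed baseline decode rate
SPEEDUP = 15.0                      # the reported 15x figure
COMPLETION_TOKENS = 1500            # assumed size of one code completion

baseline = generation_time_seconds(COMPLETION_TOKENS, BASELINE_TOKENS_PER_SECOND)
accelerated = generation_time_seconds(
    COMPLETION_TOKENS, BASELINE_TOKENS_PER_SECOND * SPEEDUP
)

print(f"baseline:    {baseline:.1f} s")
print(f"accelerated: {accelerated:.1f} s")
```

Under these assumed inputs, a completion that takes 15 seconds to stream would finish in about one second. Real-world gains would also depend on factors this sketch ignores, such as network round trips, queueing, and prompt processing time.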

Why Cerebras?

Cerebras' wafer-scale approach differs fundamentally from traditional chip design. Rather than cutting a wafer into many smaller chips and wiring them back together, Cerebras builds a single processor from an entire wafer, keeping communication on-chip and avoiding the interconnect bottlenecks that plague distributed GPU systems. The partnership signals OpenAI's confidence in this alternative architecture for production workloads, particularly those demanding consistent, predictable performance.

This move reflects a broader industry trend: as AI models mature, the bottleneck shifts from training to inference, and general-purpose hardware becomes a poorer fit. Specialized silicon, whether from Cerebras, Groq, or other emerging players, offers tangible advantages for specific use cases.

Market Implications

The Cerebras partnership carries strategic weight beyond raw performance metrics:

- Vendor Diversification: OpenAI is reducing reliance on NVIDIA's GPU ecosystem, a significant statement in the AI infrastructure market

- Inference Economics: Faster inference on specialized hardware could reshape pricing models for API-based AI services

- Competitive Pressure: Other AI labs are likely watching closely, as Cerebras gains credibility as a production-grade alternative to traditional accelerators

- Developer Experience: Reduced latency improves the user experience for code completion and real-time AI assistance

Technical Considerations

The deployment isn't without trade-offs. Cerebras' wafer-scale architecture excels at specific workload patterns—particularly those with high parallelism and dense computation. Code generation, which benefits from rapid token generation and consistent throughput, aligns well with these strengths.

However, questions remain about how well the approach generalizes to diverse workloads and about the long-term economics of manufacturing wafer-scale chips in volume. The Register's analysis notes that while performance gains are undeniable, the infrastructure costs and operational complexity warrant scrutiny from enterprises considering adoption.

What's Next?

This partnership likely represents the beginning of a broader trend toward hardware-software co-optimization in AI. As models stabilize and inference becomes the primary cost driver, expect more specialized deployments tailored to specific use cases—not just code generation, but retrieval-augmented generation, real-time translation, and other latency-sensitive applications.

For OpenAI, the move signals confidence in Cerebras' technology and a willingness to diversify its infrastructure strategy. For the broader industry, it's a reminder that the future of AI isn't determined by model parameters alone—it's shaped by the silicon running underneath.