OpenAI Updates Agents SDK with Enhanced Security Features

OpenAI updates its Agents SDK with native sandboxing and a model harness for secure, scalable AI agents.

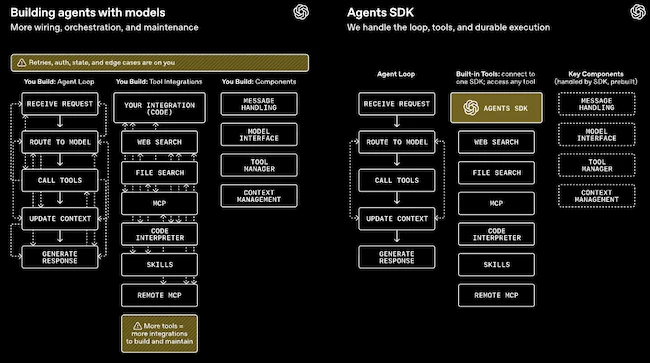

OpenAI released a significant update to its Agents SDK on April 16, 2026, introducing native sandbox execution and a model-native harness. The update is intended to help developers build more secure, durable, and scalable AI agents that can work with files, tools, and extended workflows (OpenAI).

Key Features of the Updated Agents SDK

The update focuses on two primary components: a model harness and a sandbox environment. The two can run on the same machine, or separately for stronger isolation, durability, and security. The harness equips agents with instructions, tools, approvals, tracing, handoffs, resume bookkeeping, and behaviors similar to Codex-style agents, so that long-running tasks are handled in a coordinated way (OpenAI Community).
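To make "resume bookkeeping" concrete, here is a minimal sketch of the general idea: record each completed step in a checkpoint file so that an interrupted task can pick up where it stopped. This is not the Agents SDK's API. The function name `run_with_checkpoint`, the JSON file layout, and the step names are all assumptions made for illustration.

```python
import json
import tempfile
from pathlib import Path


def run_with_checkpoint(steps, checkpoint_path):
    """Run named steps in order, skipping any already recorded as done.

    `steps` is a list of (name, callable) pairs; `checkpoint_path` is a JSON
    file holding the names of completed steps. Both are illustrative only.
    """
    path = Path(checkpoint_path)
    done = json.loads(path.read_text()) if path.exists() else []
    executed = []
    for name, action in steps:
        if name in done:
            continue  # finished in an earlier run, so do not repeat it
        action()
        done.append(name)
        path.write_text(json.dumps(done))  # persist progress after each step
        executed.append(name)
    return executed


def demo():
    """Simulate an interruption after the first step, then resume."""
    with tempfile.TemporaryDirectory() as tmp:
        ckpt = Path(tmp) / "progress.json"
        log = []
        steps = [
            ("fetch", lambda: log.append("fetch")),
            ("build", lambda: log.append("build")),
        ]
        first = run_with_checkpoint(steps[:1], ckpt)  # only "fetch" runs
        second = run_with_checkpoint(steps, ckpt)     # resumes at "build"
        return first, second, log
```

Writing the checkpoint after every step trades a little I/O for the guarantee that at most one step is repeated after a crash. A production harness would presumably track richer state than a list of names.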

Sandbox Environment

The sandbox provides a dedicated, isolated compute environment for files, commands, packages, and artifacts, keeping credentials and orchestration outside the environment where model-generated code runs. Developers can customize workspaces via a Manifest file, which supports:

- Staging local files and cloning repositories.

- Creating output directories.

- Mounting external storage like S3, GCS, Azure Blob Storage, and Cloudflare R2.

- Git repository operations (OpenAI Community).
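The capabilities listed above can be pictured as a small declarative structure. The sketch below is purely hypothetical: the source does not show the Manifest's actual schema, so the class names `WorkspaceManifest` and `Mount`, every field name, and the provider keys are assumptions chosen to mirror the list, not the SDK's real format.

```python
from dataclasses import dataclass, field


@dataclass
class Mount:
    """External storage to attach (hypothetical fields)."""
    provider: str    # assumed keys: "s3", "gcs", "azure_blob", "r2"
    bucket: str
    mount_path: str


@dataclass
class WorkspaceManifest:
    """Hypothetical stand-in for a sandbox workspace description."""
    local_files: list[str] = field(default_factory=list)
    git_repos: list[str] = field(default_factory=list)
    output_dirs: list[str] = field(default_factory=list)
    mounts: list[Mount] = field(default_factory=list)

    def validate(self) -> list[str]:
        """Return a list of problems; an empty list means no issue was found."""
        problems = []
        known = {"s3", "gcs", "azure_blob", "r2"}
        for m in self.mounts:
            if m.provider not in known:
                problems.append(f"unknown provider: {m.provider}")
            if not m.mount_path.startswith("/"):
                problems.append(f"mount path not absolute: {m.mount_path}")
        paths = [m.mount_path for m in self.mounts] + self.output_dirs
        if len(paths) != len(set(paths)):
            problems.append("duplicate path among mounts and output dirs")
        return problems
```

A workspace might then be described as `WorkspaceManifest(git_repos=["https://example.com/org/repo.git"], output_dirs=["/workspace/out"], mounts=[Mount("s3", "example-bucket", "/mnt/data")])`, with `validate()` run before the sandbox is provisioned. Keeping the description declarative is what would make it portable across sandbox providers.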

New sandbox-native capabilities include filesystem tools, shell access, skills, memory management, and compaction, so data can be brought into the sandbox instead of being loaded into the model's context window. Permissions follow the Unix filesystem model, which allows granular control over what an agent may read, write, or execute (OpenAI Community).
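For readers less familiar with the Unix model mentioned here, the following sketch shows the classic owner/group/other check using Python's standard `stat` module. It illustrates the permission scheme in general, not how the SDK enforces it; the helper name `can_access` and its parameters are assumptions, and root override and ACLs are deliberately ignored.

```python
import stat


def can_access(mode: int, owner_uid: int, owner_gid: int,
               uid: int, gids: set[int], want: str) -> bool:
    """Pick the owner, group, or other triad, then test a single bit.

    `want` is "r", "w", or "x". Root override and ACLs are not modeled.
    """
    bits = {
        "r": (stat.S_IRUSR, stat.S_IRGRP, stat.S_IROTH),
        "w": (stat.S_IWUSR, stat.S_IWGRP, stat.S_IWOTH),
        "x": (stat.S_IXUSR, stat.S_IXGRP, stat.S_IXOTH),
    }[want]
    if uid == owner_uid:
        return bool(mode & bits[0])   # owner triad applies
    if owner_gid in gids:
        return bool(mode & bits[1])   # group triad applies
    return bool(mode & bits[2])       # everyone else
```

With mode `0o640`, the owner can read and write, members of the owning group can only read, and all other users are denied. That is the kind of granularity the paragraph above describes.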

Additional Enhancements

Other additions include configurable memory to prevent chat history sprawl, Codex-like filesystem tools, MCP integrations, shell execution, and apply-patch-style file editing. The API remains backward-compatible, which should ease adoption for existing users (Junia AI).
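One common way to keep chat history from sprawling is compaction: older turns are collapsed into a single summary while the most recent turns stay verbatim. The sketch below shows that general technique only. The function `compact_history`, the message dictionary shape, and the placeholder summarizer are assumptions, not the SDK's memory interface.

```python
def compact_history(messages, keep_last=4, summarize=None):
    """Replace all but the newest `keep_last` messages with one summary entry."""
    if summarize is None:
        # Placeholder summarizer; a real system would likely call a model here.
        def summarize(old):
            return f"[summary of {len(old)} earlier messages]"
    if len(messages) <= keep_last:
        return list(messages)  # nothing to compact
    cut = len(messages) - keep_last
    old, recent = messages[:cut], messages[cut:]
    return [{"role": "system", "content": summarize(old)}] + list(recent)
```

The `keep_last` threshold is the configurable part: a larger value preserves more verbatim context at the cost of a longer prompt.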

Past Performance and Track Record

OpenAI's Agents SDK has evolved rapidly since its initial preview in late 2025. Early versions focused on basic tool-calling and short-session agents, but production deployments revealed limitations in handling stateful, long-running workflows. This update directly addresses those gaps, with early community tests reporting improved durability and faster iteration on complex agents (Junia AI).

Competitor Comparison

| Feature | OpenAI Agents SDK (Apr 2026) | Anthropic's Claude Agents | LangChain/LangGraph | Vercel AI SDK |
|---|---|---|---|---|
| Sandboxing | Native, Unix-like permissions, external storage mounts | Container-based, but less granular file perms | Custom via Docker, no built-in Manifest | Basic isolation, no shell/filesystem |
| State Durability | Snapshot/rehydration, memory compaction | Session resuming via API | Graph-based persistence | Ephemeral, relies on external DB |
| Harness/Tools | Model-native (tracing, handoffs, Codex-style) | Tool-use with approvals | Modular chains/graphs | React-like, UI-focused |
| Scalability | Multi-provider Manifest portability | Enterprise-focused, managed | Open-source, self-hosted | Edge-optimized, short tasks |
| Security | Credential isolation, user perms | Strong constitutional AI | Plugin-dependent | Vercel runtime limits |

OpenAI's update gives it an edge in developer control and portability over Anthropic's more enterprise-oriented agents, which prioritize safety but limit customization (Junia AI).

Strategic Context

This release arrives amid surging demand for production-grade AI agents in 2026, driven by enterprise adoption of models like o3. OpenAI faces pressure from Anthropic's Claude 3.5 agent tools and Google's Gemini agents, which have captured finance and devops markets. The sandboxing work responds to high-profile incidents of agent escapes and to regulatory scrutiny of AI safety (Junia AI).

Implications and Skeptical Views

For developers, the SDK lowers barriers to in-house agent builds, potentially disrupting managed services while enabling hybrid workflows across providers. Early adopters report 40% cost savings over custom runtimes (OpenAI Community).

Critics argue that the SDK still lacks a robust verification layer for model outputs, which leaves sandbox actions exposed to hallucinations, and question whether Manifest portability truly avoids OpenAI lock-in (Hacker News). Overall, the update positions OpenAI as a leading option for secure, file-capable agents and may accelerate the shift from chatbots to autonomous systems in software engineering and beyond.