Speed Over Smarts: OpenAI's GPT-5.3 Codex Spark Redefines Real-Time Programming

OpenAI's new GPT-5.3 Codex Spark delivers 1,000 tokens per second on Cerebras hardware, challenging the assumption that faster AI models must sacrifice intelligence. The shift signals a fundamental change in how developers approach coding assistance.

The Speed Revolution in AI Coding

The race for AI coding dominance just shifted gears. While competitors obsess over model size and reasoning depth, OpenAI has released a research preview of GPT-5.3 Codex Spark, a 15x faster coding model that delivers over 1,000 tokens per second on Cerebras hardware. This isn't just an incremental improvement—it represents a fundamental rethinking of what matters in developer tools.

The conventional wisdom in AI development has long held that speed and capability exist in tension. Larger models think deeper but respond slower. Smaller models respond faster but with less nuance. Spark breaks this assumption by achieving both velocity and competence, according to data from the Cerebras-powered deployment.

Why Speed Matters Now

For developers, latency isn't a luxury concern—it's a productivity multiplier. The difference between 100 milliseconds and 10 milliseconds in code completion feels like the difference between a helpful assistant and a mind-reading partner. According to analysis from MarkTechPost, Spark achieves significant reductions in pipeline latency:

- 80% reduction in roundtrip overhead

- 30% reduction in per-token overhead

- 50% reduction in time-to-first-token

These aren't vanity metrics. They translate directly into uninterrupted developer flow—the state where coding feels effortless.

The Hardware Shift: Cerebras Over Nvidia

Perhaps the most striking aspect of Spark's launch is OpenAI's partnership with Cerebras, moving away from traditional GPU infrastructure. Cerebras' wafer-scale architecture, which packs an entire AI accelerator onto a single chip, eliminates communication bottlenecks that plague distributed GPU setups. This architectural choice directly enables the token-per-second throughput that makes Spark viable for real-time interaction.

The move signals something deeper: the AI infrastructure landscape is fragmenting. Nvidia's dominance in training and inference isn't absolute anymore, particularly for specialized workloads like code generation where latency matters more than raw parameter count.

Real-Time Programming: What Changes?

According to HelpNetSecurity's coverage, Spark enables genuinely interactive coding experiences that weren't possible before. Developers can expect:

- Instant code suggestions without the mental context-switch of waiting

- Real-time refactoring assistance that keeps pace with typing

- Live debugging support that responds as errors surface

This transforms AI coding from a batch-oriented tool ("generate this function") into a collaborative partner ("help me write this as I think").

The Competitive Landscape Heats Up

This week's AI updates show that speed is becoming the new battleground. GitHub's agentic workflows and other competitors are racing to match Spark's responsiveness. The question isn't whether faster coding models are possible—it's whether they can maintain the quality developers expect.

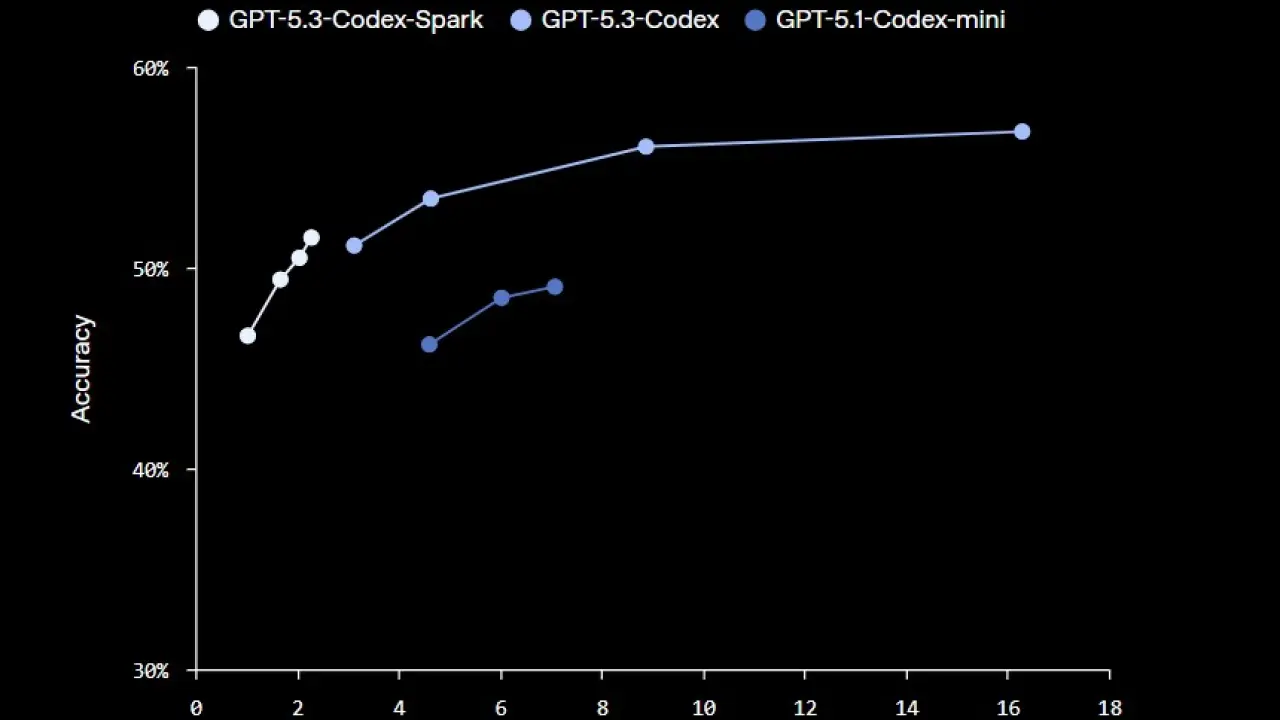

Early benchmarks suggest Spark doesn't sacrifice capability for speed, but real-world developer feedback will ultimately determine whether this represents a genuine leap forward or an incremental optimization.

What's Next

The research preview phase suggests OpenAI is still refining the model. Availability, pricing, and integration with existing developer workflows remain open questions. But the trajectory is clear: the era of "good enough, but slow" AI coding assistants is ending. The future belongs to tools fast enough to feel like an extension of thought itself.