The Double-Edged Sword: How AI Coding Tools Boost Productivity While Multiplying Security Risks

AI coding assistants promise faster development cycles, but new research reveals they're introducing critical security vulnerabilities that developers and enterprises must address immediately.

The Productivity Paradox

The race to automate software development has reached an inflection point. AI coding tools are transforming how developers write code, slashing development time and reducing manual labor, but they are also opening new attack vectors that enterprises are struggling to contain. The result is a sharper tension between speed and security.

Developers are embracing AI-powered coding assistants at unprecedented rates. These tools promise to accelerate feature delivery, reduce repetitive work, and democratize coding for less experienced developers. Yet beneath this productivity surge lies a troubling reality: AI-generated code often contains security flaws that human reviewers miss, and the compliance burden is intensifying across the open-source ecosystem.

The Security Challenge

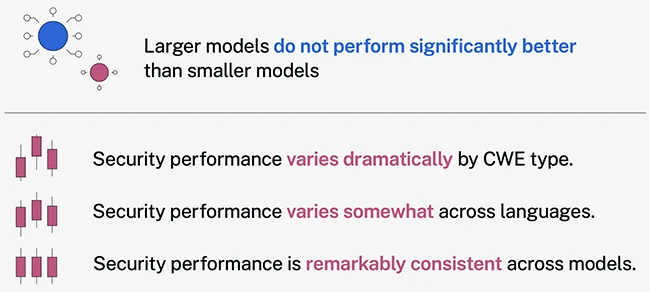

The core problem is straightforward: AI models trained on vast repositories of code, including vulnerable legacy code, can reproduce those same vulnerabilities in new applications. According to security researchers, nearly half of AI-generated code may contain insecure patterns, ranging from hardcoded credentials to SQL injection vulnerabilities.
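To make the SQL injection pattern concrete, here is a minimal sketch using Python's built-in sqlite3 module. The table, the function names, and the payload are invented for illustration and are not drawn from any specific tool's output. It contrasts a query assembled by string interpolation with a parameterized one.

```python
import sqlite3


def build_demo_db():
    """Create a throwaway in-memory database (illustrative data only)."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE users (name TEXT, role TEXT)")
    conn.executemany(
        "INSERT INTO users VALUES (?, ?)",
        [("alice", "admin"), ("bob", "viewer")],
    )
    return conn


def find_user_unsafe(conn, name):
    # Insecure pattern: user input is pasted directly into the SQL text,
    # so quotes in `name` can change the meaning of the query.
    query = f"SELECT name, role FROM users WHERE name = '{name}'"
    return conn.execute(query).fetchall()


def find_user_safe(conn, name):
    # Safer pattern: the driver binds `name` as data, never as SQL.
    return conn.execute(
        "SELECT name, role FROM users WHERE name = ?", (name,)
    ).fetchall()


conn = build_demo_db()
payload = "nobody' OR '1'='1"  # hypothetical attacker-supplied input

unsafe_rows = find_user_unsafe(conn, payload)  # matches every row
safe_rows = find_user_safe(conn, payload)      # matches nothing
```

With the interpolated query, the payload rewrites the WHERE clause and every row comes back. With the parameterized query, the same input is treated as an ordinary (nonexistent) user name.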

Key security concerns include:

- Supply chain contamination: AI tools may inadvertently incorporate malicious or vulnerable dependencies

- Compliance gaps: AI-generated contributions are flooding open-source repositories without proper security vetting, adding to maintainer burnout

- Lack of context awareness: AI models don't understand organizational security policies or threat models

- Licensing violations: Generated code may inadvertently violate open-source licenses

Industry Response and Best Practices

Security teams are beginning to establish guardrails. The Open Source Security Foundation has published guidance on AI software development security, emphasizing the need for rigorous code review, dependency scanning, and security testing.

Enterprise security leaders predict that AI-driven application security will become a critical investment area in 2026, with organizations implementing:

- Automated security scanning of AI-generated code

- Enhanced code review processes with security-focused reviewers

- Integration of software composition analysis (SCA) tools

- Regular security training for developers using AI assistants
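As a rough illustration of what automated scanning of AI-generated code involves, the sketch below flags two of the insecure patterns mentioned earlier: hardcoded credentials and SQL built with f-strings. The rule names, regular expressions, and sample snippet are all assumptions made up for this example. Production scanners rely on far more robust analysis than line-by-line regex matching, so treat this as a toy, not a substitute for a real tool.

```python
import re

# Hypothetical rules for illustration; real scanners use much richer analysis.
RULES = {
    "hardcoded-credential": re.compile(
        r"""(?i)\b(password|passwd|secret|api_key|token)\b\s*=\s*["'][^"']+["']"""
    ),
    "sql-fstring": re.compile(r"""execute\(\s*f["'].*\{"""),
}


def scan(source):
    """Return (line_number, rule_name) pairs for each suspicious line."""
    findings = []
    for line_no, line in enumerate(source.splitlines(), start=1):
        for rule_name, pattern in RULES.items():
            if pattern.search(line):
                findings.append((line_no, rule_name))
    return findings


# Invented snippet standing in for AI-generated code under review.
SAMPLE = "\n".join([
    'api_key = "sk-test-123"',
    'cursor.execute(f"SELECT * FROM users WHERE name = \'{name}\'")',
    'cursor.execute("SELECT * FROM users WHERE name = ?", (name,))',
])

findings = scan(SAMPLE)
for line_no, rule_name in findings:
    print(f"line {line_no}: {rule_name}")
```

A check like this could run in a pre-commit hook or CI step so that flagged lines reach a security-focused reviewer before merge. The parameterized query on the third line of the sample is deliberately left unflagged.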

The Tool Landscape

The market for AI coding agents is expanding rapidly, with vendors competing on speed, accuracy, and, increasingly, security features. Selecting the right tools, however, requires understanding both their productivity benefits and their security implications.

Organizations should evaluate AI coding tools not just on development velocity, but on their security posture: Do they flag vulnerable patterns? Can they be configured to enforce organizational policies? Are they transparent about training data sources?

The Path Forward

The future of AI-assisted development isn't about choosing between productivity and security; it's about integrating both. Product security leaders are increasingly focused on building secure AI development practices that take advantage of automation without sacrificing oversight.

The winners will be organizations that treat AI coding tools as force multipliers for their security teams, not replacements for human judgment. The stakes are too high for anything less.